How to Find Malicious Insiders: Tackling Insider Threats Using Behavioral Indicators


Insider threats are insidious. Because insiders work within your network, have access to critical systems and assets, and use known devices, their activity can be very difficult to detect.

For an in-depth view on how to use both next-gen EDR and UEBA for a comprehensive defense against insider threats, watch this Exabeam webinar with Cybereason.

Here is a digest of the webinar, covering five important things to know about insider threats:

This content is part of a series about insider threats.

Recommended Reading: Security Big Data Analytics: Past, Present and Future.

1) The two types of insider threats

An insider threat is carried out through the access an organization grants to trusted users of its network. There are two types:

- Compromised insider – An external actor who is using the stolen credentials of an insider to gain access to your systems. If undetected, this attacker can become a long-term advanced persistent threat (APT), using stealth and continuous processes to attack your organization.

- Malicious insider – An employee, contractor, partner, or other trusted individual who has been granted some level of access to your systems. They might be developing a second source of income using your data or network, sabotaging your company, or stealing your IP on the way out the door.

2) Why are insider threats so difficult to detect?

It’s necessary to grant legitimate users access to the resources they need to do their job, whether it’s email, cloud services, or network resources. And of course, some employees must have access to sensitive information like financials, patents, and more.

Insider threats are difficult to find because they use legitimate credentials, known machines, and the privileges you've granted. To many security products, their behavior appears normal and doesn't set off any alarms.

Detecting these threats becomes even more complicated when the attacker moves laterally, changing credentials, IP addresses, or devices to hide their tracks and reach high-value targets.

Read our detailed explainer about detecting insider threats.

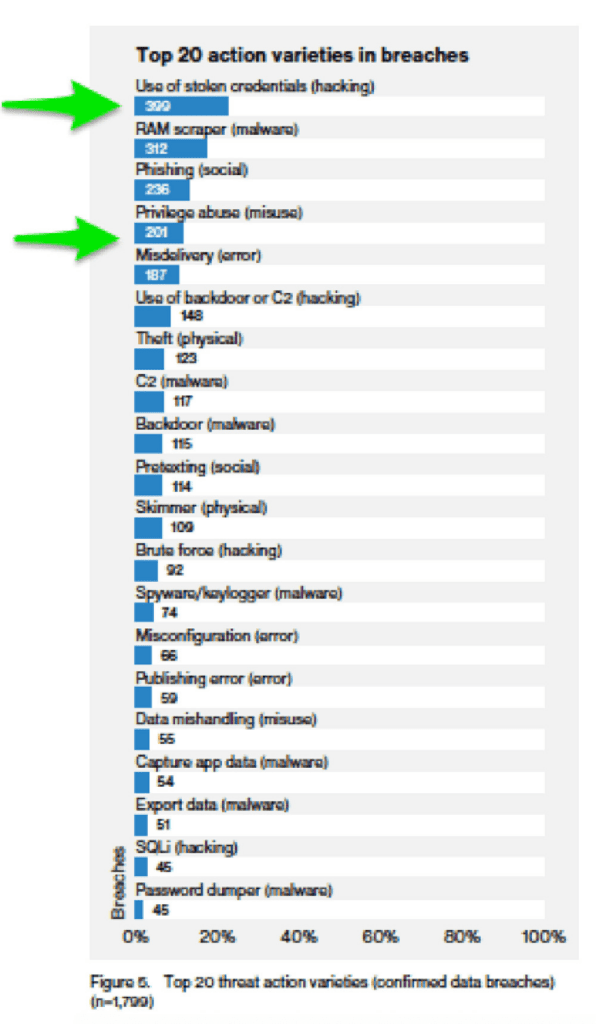

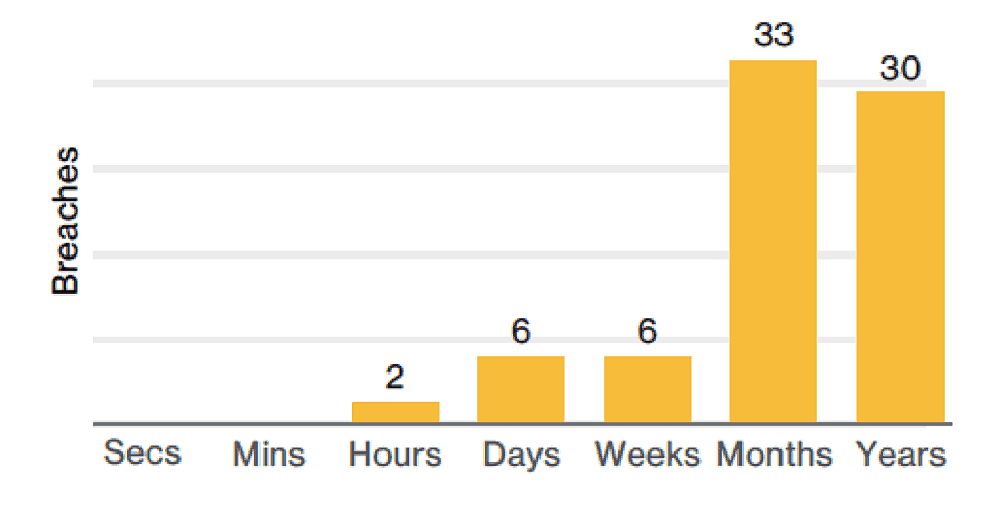

3) How common are insider threats?

Not only are insider threats incredibly common, they frequently go undetected for a long time.

According to last year's Verizon Data Breach Investigations Report (DBIR), 39% of the malicious insider breaches investigated went undiscovered for years, and 42% went undiscovered for months.

Tips from the expert

Steve Moore is Vice President and Chief Security Strategist at Exabeam, helping drive solutions for threat detection and advising customers on security programs and breach response. He is the host of "The New CISO Podcast," a Forbes Tech Council member, and co-founder of TEN18 at Exabeam.

In my experience, here are tips that can help you better detect and prevent insider threats using tools like UEBA and next-gen EDR:

Combine UEBA with endpoint detection

Pair User and Entity Behavior Analytics (UEBA) with advanced Endpoint Detection and Response (EDR) tools. UEBA flags anomalies in user behavior while EDR detects endpoint-specific indicators; together they give you a fuller view of both user activity and device security.

Set up risk scoring for anomalous behavior

Implement a risk scoring model that assigns points for unusual behavior such as privilege escalation, lateral movement, or data exfiltration. This model should dynamically adjust based on each user’s typical activity, allowing you to escalate suspicious cases faster.
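To make the idea of a dynamically adjusted score concrete, here is a minimal sketch, assuming a hypothetical set of behavior labels, point weights, and escalation threshold (none of these come from Exabeam). Each behavior has a base weight, and the points are discounted by how often this particular user has exhibited the behavior before, so behavior that is routine for one user scores lower than behavior that is new for them.

```python
from collections import Counter
from typing import Iterable

# Hypothetical base weights; an organization would tune these to its own risk model.
BASE_POINTS = {
    "privilege_escalation": 40,
    "lateral_movement": 30,
    "data_exfiltration": 50,
}
DEFAULT_POINTS = 10          # hypothetical weight for behaviors not listed above
ESCALATION_THRESHOLD = 60    # hypothetical score at which an analyst reviews the user


def score_event(behavior: str, user_history: Counter) -> float:
    """Risk points for one event, discounted by how often this user has shown the behavior before."""
    base = BASE_POINTS.get(behavior, DEFAULT_POINTS)
    # Never-seen behavior earns full points; behavior that is routine for this user earns less.
    return base / (1 + user_history[behavior])


def total_risk(events: Iterable[str], user_history: Counter) -> float:
    """Sum the adjusted points for a batch of observed behaviors."""
    return sum(score_event(e, user_history) for e in events)


def should_escalate(score: float) -> bool:
    """True once the accumulated score reaches the review threshold."""
    return score >= ESCALATION_THRESHOLD
```

For example, for an administrator whose history is `Counter({"lateral_movement": 4})`, a lateral-movement event adds only 6 points, while a first-ever data-exfiltration event adds the full 50. The inverse-frequency discount is just one simple way to "adjust based on each user's typical activity"; a production system would likely use richer baselines.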

Monitor for lateral movement at the network level

Use network traffic analysis alongside UEBA to detect lateral movement, where an insider or compromised account switches machines or IP addresses. Lateral movement often leaves only subtle traces that basic monitoring tools can miss.
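One simple signal for lateral movement is an account suddenly logging into many hosts it has never used before. The sketch below assumes a hypothetical per-account baseline of known hosts and a list of `(account, host)` login pairs for the current time window; the `max_new_hosts` cutoff is an illustrative assumption. The same approach works with source IP addresses in place of hostnames.

```python
from collections import defaultdict


def new_host_fanout(baseline, window_events):
    """
    baseline: dict mapping account -> set of hosts it normally logs into
              (hypothetical, built from historical login data).
    window_events: iterable of (account, host) logins in the current window.
    Returns account -> set of hosts accessed in the window that are outside its baseline.
    """
    novel = defaultdict(set)
    for account, host in window_events:
        if host not in baseline.get(account, set()):
            novel[account].add(host)
    return dict(novel)


def flag_lateral_movement(baseline, window_events, max_new_hosts=2):
    """Return accounts (sorted) that reached more than max_new_hosts unfamiliar hosts in the window."""
    fanout = new_host_fanout(baseline, window_events)
    return sorted(acct for acct, hosts in fanout.items() if len(hosts) > max_new_hosts)
```

A single login to a new server is common and harmless; it is the fan-out to several unfamiliar hosts in a short period that makes this pattern worth escalating.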

Leverage decoy accounts to bait insiders

Create decoy accounts that appear highly privileged but have no real access to sensitive data. Because these accounts have no legitimate use, any attempt to use them can alert security teams to a malicious insider or compromised account.
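Because a decoy account should never be touched, detection can be a plain lookup rather than a statistical model. Here is a minimal sketch, assuming hypothetical decoy account names and a hypothetical authentication-log schema with `account`, `source_host`, and `result` fields.

```python
# Hypothetical decoy account names; real decoys would be named to look attractive to an attacker.
DECOY_ACCOUNTS = frozenset({"adm_backup_legacy", "sql_svc_old"})


def decoy_alerts(auth_events):
    """
    auth_events: iterable of dicts with 'account', 'source_host', and 'result' keys
    (a hypothetical log schema). Because decoys have no legitimate use, every attempt,
    successful or failed, produces an alert.
    """
    alerts = []
    for ev in auth_events:
        # Case-insensitive match, since many directories treat account names that way.
        if ev["account"].lower() in DECOY_ACCOUNTS:
            alerts.append(
                f"ALERT: decoy account {ev['account']} used from {ev['source_host']} (result: {ev['result']})"
            )
    return alerts
```

Alerting on failed attempts matters here: an attacker guessing a decoy's password is just as telling as one who succeeds.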

Correlate physical and digital behaviors

Track physical access, like badge-in events, and correlate it with digital actions. For example, a login from an on-site workstation on a day the user never badged in, or logins to critical systems from unusual locations or after hours, may indicate a threat.
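A basic physical/digital correlation joins badge records to login records by user and day. The sketch below assumes hypothetical record shapes: badge-ins as `(user, datetime)` pairs and logins as `(user, datetime, is_onsite)` triples, where `is_onsite` marks a login from an on-premises workstation. It flags on-site logins on days with no matching badge-in, which could mean someone else is using that user's credentials at their desk.

```python
from datetime import datetime


def logins_without_badge(badge_events, logins):
    """
    badge_events: iterable of (user, datetime) badge-in records.
    logins: iterable of (user, datetime, is_onsite) interactive logins.
    Returns on-site logins that occurred on a day the user never badged in.
    """
    # Set of (user, calendar day) pairs on which the user was physically present.
    badged = {(user, ts.date()) for user, ts in badge_events}
    return [
        (user, ts)
        for user, ts, is_onsite in logins
        if is_onsite and (user, ts.date()) not in badged
    ]
```

Remote logins are deliberately excluded, since they are not expected to have a badge-in; those would be judged by other signals such as location or time of day.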

Deploy machine learning for privilege escalation detection

Use machine learning models to detect anomalous privilege escalations. These include creating new admin accounts, switching between accounts, or escalating privileges over a short period of time, any of which may indicate an insider threat.
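Before any model is trained, it helps to extract the pattern itself as a feature. The sketch below is a simple rule, not a machine learning model: it flags a user who creates an administrative account and then switches to it within a short window. The event schema and the one-hour default window are hypothetical assumptions; in practice a rule like this could feed a model as one input among many.

```python
from datetime import datetime, timedelta


def create_then_switch(events, window=timedelta(hours=1)):
    """
    events: time-ordered list of dicts in a hypothetical schema:
      {"type": "account_created", "actor": str, "target": str, "is_admin": bool, "time": datetime}
      {"type": "switch_user", "actor": str, "target": str, "time": datetime}
    Returns (creator, new_account) pairs where the creator switched to a new admin
    account they had made within `window` of creating it.
    """
    created = {}  # new admin account -> (creator, creation time)
    hits = []
    for ev in events:
        if ev["type"] == "account_created" and ev.get("is_admin"):
            created[ev["target"]] = (ev["actor"], ev["time"])
        elif ev["type"] == "switch_user" and ev["target"] in created:
            creator, t0 = created[ev["target"]]
            # Only the account's own creator switching into it, soon after creation, counts.
            if ev["actor"] == creator and ev["time"] - t0 <= window:
                hits.append((creator, ev["target"]))
    return hits
```

Requiring that the same person both creates and uses the account is what separates this pattern from routine provisioning, where one administrator creates an account for someone else.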

4) Behaviors that point to possible insider threat activity

Insider threats usually occur over time and over multiple network resources. You can find them, if you know where to look.

Here are five behavioral indicators:

- Anomalous privilege escalation – Creating new privileged or administrative accounts and then switching to them, or exploiting application vulnerabilities or logic, in order to increase access to a network or application.

- C2 communication – Any traffic or communication to a known command and control domain or IP address. There are very few, if any, legitimate reasons for employees to be accessing these locations.

- Data exfiltration – This may be digital or physical. Digitally, it may include sensitive information such as intellectual property, client lists, or patents being copied to removable devices, attached to emails, or sent to cloud storage. Physically, excessive printing of documents with default names like "document1.doc" is unusual behavior that may indicate data theft.

- Rapid data encryption – The rapid scanning, encryption, and deletion of files en masse can indicate a ransomware attack. Ransomware typically comes from a compromised insider, but rogue, malicious insiders can also deploy it.

- Lateral movement – Switching user accounts, machines, or IP addresses (in search of more valuable assets) is a behavior frequently performed during insider attacks. This is difficult to detect because it’s distributed, and usually leaves only faint hints in the logs of various siloed security tools.
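The rapid data encryption indicator lends itself to a sliding-window rate check: ransomware touches far more files per minute than a person does. Here is a minimal sketch, assuming sorted file-modification timestamps (in seconds) for one host or process; the 60-second window and 100-event threshold are hypothetical values, and a real detector would likely weigh additional signals.

```python
from collections import deque


def first_burst(timestamps, window_seconds=60, threshold=100):
    """
    timestamps: sorted file-modification times (seconds) for one host or process.
    Returns the timestamp at which more than `threshold` modifications fall within
    the trailing `window_seconds`, or None if no such burst occurs.
    """
    window = deque()
    for t in timestamps:
        window.append(t)
        # Evict events that are no longer inside the trailing window (t - window_seconds, t].
        while window[0] <= t - window_seconds:
            window.popleft()
        if len(window) > threshold:
            return t
    return None
```

Each timestamp enters and leaves the deque at most once, so the check runs in linear time even over very large file-activity logs.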

Read our detailed explainer about detecting insider indicators.

5) How can you more reliably detect insider threats?

For the single-dimensional attacks of the past, like SQL injection, signatures or correlation rules were often an effective means of detection. Today, insider threat attacks spin multiple identities and machines into a tangled web. These attacks involve trusted parties and span months or years. For these protracted assaults, no single trigger or signature will suffice. However, insider threats can be detected another way: behavioral analysis.

Enter UEBA – User and Entity Behavior Analytics

UEBA detects threats by using data science and machine learning to learn how machines and humans normally behave, and then finding risky, anomalous activity that deviates from that norm. Each time anomalous behavior is detected, risk points are added to a risk score until the user or machine crosses a threshold and is escalated to a security analyst for review.
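The baseline-deviation-threshold loop described above can be sketched in a few lines. This is an illustration only, not Exabeam's models: it stands in a simple z-score against each entity's own history for the machine learning the text mentions, and the 3-sigma cutoff, 25-point award, and 90-point threshold are hypothetical values.

```python
from statistics import mean, stdev


def anomaly_points(history, today, z_cutoff=3.0, points=25):
    """
    history: past daily values for one entity (e.g., MB uploaded per day); needs at least 2 values.
    Returns `points` if today's value sits more than z_cutoff standard deviations above
    this entity's own baseline, else 0.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat history: any increase at all is a deviation.
        return points if today > mu else 0
    z = (today - mu) / sigma
    return points if z > z_cutoff else 0


class RiskTracker:
    """Accumulates risk points per user or machine and reports threshold crossings."""

    def __init__(self, threshold=90):
        self.threshold = threshold
        self.scores = {}

    def add(self, entity, pts):
        """Add points; return True only on the call that crosses the threshold."""
        before = self.scores.get(entity, 0)
        after = before + pts
        self.scores[entity] = after
        return before < self.threshold <= after
```

Returning `True` only at the moment of crossing means an analyst is notified once per escalation rather than on every subsequent anomaly for the same entity.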

Why is this a more effective approach?

- Context – UEBA maps how each user or machine normally operates, so it judges activity against what is normal for that specific user. Someone in marketing behaves differently from someone in accounting, and the baseline that UEBA builds includes this context, which helps improve detection accuracy.

- Holistic analysis – UEBA can ingest data from a wide range of security tools and model it together with other contextual data such as Active Directory or a CMDB. This means you can see the complete picture of an attack, instead of just siloed pieces of a larger puzzle.

- Future-proof – UEBA looks for abnormality, even if the attack that's unfolding has never been seen before. This means there is no need to obtain new signatures or to constantly create and update rule sets.
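To illustrate the context point, here is a minimal peer-group sketch: each user's activity is compared with the median of their own department rather than with the whole company. The activity counts, department mapping, and the 5x factor are hypothetical assumptions, and a real UEBA product would build far richer peer groups.

```python
from statistics import median


def peer_outliers(activity, department, factor=5.0):
    """
    activity: user -> count of some action in a period (e.g., files read from a finance share).
    department: user -> department name.
    Returns users (sorted) whose count exceeds `factor` times their department's median.
    """
    by_dept = {}
    for user, count in activity.items():
        by_dept.setdefault(department[user], []).append(count)
    flagged = []
    for user, count in activity.items():
        peer_median = median(by_dept[department[user]])
        # Floor the median at 1 so a department that rarely does this action still has a usable baseline.
        if count > factor * max(peer_median, 1):
            flagged.append(user)
    return sorted(flagged)
```

The same count can be normal in one group and anomalous in another: a marketing employee reading 40 finance files stands out against marketing peers who read almost none, while the same 40 reads would be unremarkable inside the finance team.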

Learn more about insider threats:

- What Is an Insider Threat? Understand the Problem and Discover 4 Defensive Strategies

- Insider Threat Indicators: Finding the Enemy Within

- How to Find Malicious Insiders: Tackling Insider Threats Using Behavioral Indicators

- Crypto Mining: A Potential Insider Threat Hidden In Your Network

