Recreating an Incident Timeline–a Manual vs. Automated Process, Part 2

- Nov 07, 2019

- Erik Randall

- 5 minutes to read

In my previous post I wrote about my experiment to manually recreate an incident timeline and started with the many questions a security analyst has to ask every day when investigating an incident. It’s laborious and difficult to sort the truth from the false positives. In this post, I will walk you through how to build a timeline using a manual investigation and look at a better way of doing this.

Analyzing the timeline

Depending on how regularly activity is logged in a production environment, you can expect to see hundreds or thousands of websites visited daily by any given user. A single page visit to a busy site such as CNN.com pulls content from many different sources and could generate 50, 60, or even 100 different URLs. An analyst does not necessarily need to look at every URL; a smaller number of queries is generally sufficient to draw a conclusion. By narrowing our attention to activities that are actually human-driven, we can usually slice up the time frames to zero in on key points of interest.

I ran 16 queries for Weber’s listed processes and a further 18 queries for related web activity, arguably a conservative number. Going back to his timeline, it looks like the risky behavior started when he first hit the “zoomer.cn” domain. That’s the time slice I want to examine more closely, looking at the five minutes before and after he hit that site.
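The five-minute slice described above can be sketched in a few lines of Python. This is only an illustration, not the query language of any particular SIEM: the `slice_around_first_hit` helper, the record layout (`time`, `domain`), and the sample events are all hypothetical.

```python
from datetime import datetime, timedelta

def slice_around_first_hit(events, domain, minutes=5):
    """Return events within +/- `minutes` of the first request to `domain`."""
    hits = [e["time"] for e in events if e["domain"] == domain]
    if not hits:
        return []  # the domain never appears in this data set
    first = min(hits)
    window = timedelta(minutes=minutes)
    return sorted(
        (e for e in events if first - window <= e["time"] <= first + window),
        key=lambda e: e["time"],
    )

# Made-up sample records, for illustration only.
events = [
    {"time": datetime(2019, 1, 1, 9, 0), "domain": "cnn.com"},
    {"time": datetime(2019, 1, 1, 9, 12), "domain": "zoomer.cn"},
    {"time": datetime(2019, 1, 1, 9, 14), "domain": "example.net"},
    {"time": datetime(2019, 1, 1, 9, 30), "domain": "zoomer.cn"},
]
window_events = slice_around_first_hit(events, "zoomer.cn")
```

Anchoring the window on the first hit, rather than on every hit, keeps the slice focused on what led up to the initial contact and what followed it.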

In a production environment you will likely see hundreds or even thousands of domains here, with perhaps 100 to 200 or more results for each one that you would have to assess. The most frequently visited sites tend to be legitimate and present little security risk. SOC analysts care about the anomalous URLs that warrant additional queries, such as zoomer.cn.
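One rough way to surface such anomalies is to count visits per domain and look at the rarely seen ones first. The sketch below assumes the domains have already been extracted from proxy logs into a plain list. The `rare_domains` helper, the `max_hits` threshold, and the sample data are hypothetical, and rarity alone is a prompt for further queries rather than proof of risk.

```python
from collections import Counter

def rare_domains(visited, max_hits=2):
    """Return (domain, hits) pairs seen at most `max_hits` times, rarest first."""
    counts = Counter(visited)
    rare = [(domain, hits) for domain, hits in counts.items() if hits <= max_hits]
    return sorted(rare, key=lambda item: (item[1], item[0]))

# Made-up visit list, for illustration only.
visited = (
    ["cnn.com"] * 40
    + ["example.org"] * 15
    + ["zoomer.cn"] * 2
    + ["example.net"]
)
candidates = rare_domains(visited)
```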

More queries

Now we’ve analyzed some benign process executions followed by a suspicious URL visit. Next I look for how the URL affected the endpoint and how it may have been compromised. The initial malware alert flagged the barbarian.jar file so we will want to explore that. As I consider other suspicious behaviors that may indicate a compromise, I want to know which processes were executed on the host. Here we see on Weber’s laptop that the endpoint security product alerted on barbarian.jar, something it determined was malware.

We know that often the first malware component to land on a host is a “dropper.” Its purpose is to fetch the next malware component that burrows deeper into the system, embedding itself into the system in an effort to avoid detection and gain persistence. So did the endpoint security product completely remediate barbarian.jar? Did it stop it from executing? Delete it off the system? Clean up all of the artifacts? Or did it miss anything?

Still more queries are required to complete our response to this incident. If the endpoint security product only partially removed the malware, then we likely need to manually remediate this endpoint by surgically removing the remaining components that were left behind. In any case, we need to be completely certain the malware hasn’t gained persistence on Weber’s system, potentially threatening our enterprise network.

To confirm the malware has been completely remediated, I run some additional queries to understand what the malware did to the endpoint. I also look at other intel that is known about this threat and check whether it jibes with what we observed in the barbarian.jar file we encountered. This often involves interacting with the system directly and looking for other evidence of persistence, as well as quarantining the system and observing its behavior over a period of time.

The ever-expanding investigation

The questions I’m immediately trying to answer are, “Was this a successful attack? Is it spreading? Is the host still actively infected?” This is where triage begins.

The next piece of investigation is directed at the websites Weber visited.

- What were those sites?

- What led him to that zoomer domain?

- Did he respond to a phishing email?

- Or was this event just a drive-by?

- Was he on some other website where a malvertisement took him to the zoomer site?

- What can we learn from this incident that can help us going forward?

The final step is to undertake a root cause analysis. We need to understand the infection vector at a granular level: not just which websites were visited, but what specifically we can do to improve our web filtering. Should that website be categorized differently? How else can we harden the host against a similar attack? Might there be some functionality within the browser, or the system itself, that can help harden it?

Now expanding to the scope of the attack, we need to ask: Are other users, endpoints, and additional network resources affected? For example, is the vssadmin.exe process normal for the environment? It frequently shows up in ransomware cases, where shadow copies are deleted to eliminate the possibility of recovering data.
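A quick way to frame the vssadmin.exe question is to list which hosts ran it at all and which invocations asked to delete shadow copies. The sketch below assumes process-creation events have been exported as dictionaries. The `vssadmin_summary` helper, the field names, and the host names are hypothetical.

```python
def vssadmin_summary(process_events):
    """Return (hosts that ran vssadmin.exe, events whose command deletes shadows)."""
    hosts = set()
    deletions = []
    for event in process_events:
        if event["process"].lower() != "vssadmin.exe":
            continue
        hosts.add(event["host"])
        command = event["command_line"].lower()
        # "vssadmin delete shadows" removes volume shadow copies.
        if "delete" in command and "shadows" in command:
            deletions.append(event)
    return sorted(hosts), deletions

# Made-up process events, for illustration only.
process_events = [
    {"host": "laptop-weber", "process": "vssadmin.exe",
     "command_line": "vssadmin.exe Delete Shadows /All /Quiet"},
    {"host": "srv-backup01", "process": "vssadmin.exe",
     "command_line": "vssadmin.exe list shadows"},
    {"host": "laptop-weber", "process": "chrome.exe",
     "command_line": "chrome.exe"},
]
hosts, deletions = vssadmin_summary(process_events)
```

If many hosts routinely run the process, it is probably normal for the environment; a single laptop deleting shadow copies is a much stronger signal.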

The investigation summary

In short, here’s what it took to investigate this incident using a standard “query and pivot” methodology.

Summary of Results

- 96 queries (62 required in the demo, 34 more added for additional processes and URLs)

- 12.9 minutes each (average, includes human analysis time)

- 1238.4 minutes expended => 20.64 hours

Assumptions

The legacy SIEM is reasonably tuned and has 60 days of historical data available.

Estimated query times were:

- 1-day queries return in 2.9 minutes

- 60-day queries take 47 minutes each

Analysis time

I added 10 minutes of analysis after each query to account for documenting results, determining next steps, and crafting the next query.
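The totals in the summary can be reproduced with a few lines of arithmetic. One assumption in this sketch is that the 12.9-minute average is the 1-day query time (2.9 minutes) plus the 10 minutes of analysis; the numbers are consistent with that reading, but the post does not say so explicitly. The variable names are mine.

```python
QUERIES_IN_DEMO = 62      # queries required in the demo
QUERIES_ADDED = 34        # 16 process queries + 18 web-activity queries
ONE_DAY_QUERY_MIN = 2.9   # estimated return time for a 1-day query
ANALYSIS_MIN = 10         # analysis time added after each query

total_queries = QUERIES_IN_DEMO + QUERIES_ADDED
# Assumption: every query is a 1-day query followed by analysis.
minutes_per_query = ONE_DAY_QUERY_MIN + ANALYSIS_MIN
total_minutes = total_queries * minutes_per_query
total_hours = total_minutes / 60

print(f"{total_queries} queries x {minutes_per_query:.1f} min "
      f"= {total_minutes:.1f} min ({total_hours:.2f} h)")
```

Under the same assumptions, any query that had to span the full 60 days would cost 47 minutes plus analysis, so the total would climb quickly.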

The Exabeam approach

In the simulation, I show the legacy SIEM approach to investigating an incident, whereby an analyst (or multiple analysts) must piece together a timeline of the events involved to demonstrate what actually transpired during the course of a compromise. With Exabeam, these timelines are pre-built for every user and every session each day. Additionally, Exabeam Smart Timelines include information about deviations from normal activity for the user, their peer groups, and the organization as a whole. All of this is processed through risk rules that add risk points to anomalous and risky activity, which points the analyst right to the areas of interest without having to hunt through piles of raw log data.

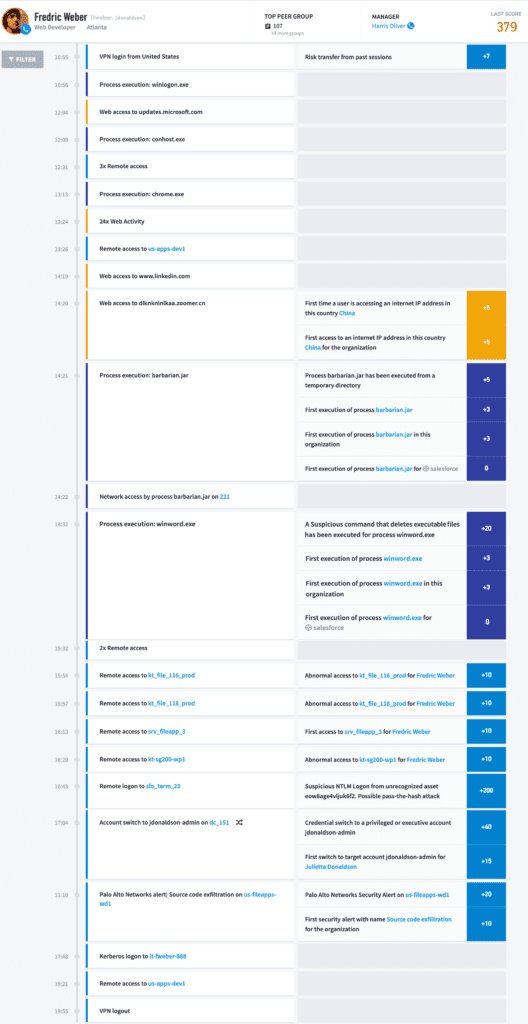

Frederick Weber’s Smart Timeline (Figure 5) shows how what was previously a manual process, one that required analysts to piece together which events are relevant to the incident and which are not, is now automated in Exabeam’s Advanced Analytics solution.

As you can see, the analyst’s attention is drawn to the events that have risk scores associated with them, and we can filter this view to show only those events, cutting right to the chase.

Exabeam solves the puzzle for analysts, improving response times and empowering the team to focus on what’s important—responding to the incident rather than spending precious time hunting through raw logs and trying to make sense of machine data.

Erik Randall

Senior Sales Engineer | Exabeam | Erik Randall is a Senior Security Engineer at Exabeam. His specialties include security service management, systems architecture, network design, and systems administration with extensive experience in engineering, manufacturing, services, and financial industries. Prior to joining Exabeam, he was a Global Manager for the Cybersecurity Operations Center at ITT Inc., where he co-founded and managed the global SOC, overseeing daily incident response activities, as well as provided strategic direction of enterprise cyber defense capabilities. He graduated from the Rochester Institute of Technology.
