
In this SIEM Explainer, we explain how SIEM systems are built, how they go from raw event data to security insights, and how they manage event data on a huge scale. We cover both traditional SIEM platforms and modern SIEM architecture based on data lake technology.

Security information and event management (SIEM) platforms collect log and event data from security systems, networks and computers, and turn it into actionable security insights. SIEM technology can help organizations detect threats that individual security systems cannot see, investigate past security incidents, perform incident response and prepare reports for regulation and compliance purposes.

This content is part of a series about security information and event management (SIEM).

12 Components and Capabilities in a SIEM Architecture

Data Aggregation

Collects and aggregates data from security systems and network devices.

Threat Intelligence Feeds

Combines internal data with third-party data on threats and vulnerabilities.

Correlation and Security Monitoring

Links events and related data into security incidents, threats or forensic findings.

Analytics

Uses statistical models and machine learning to identify deeper relationships between data elements.

Alerting

Analyzes events and sends alerts to notify security staff of immediate issues.

Dashboards

Creates visualizations to let staff review event data, identify patterns and anomalies.

Compliance

Gathers log data for standards like HIPAA, PCI DSS, HITECH, SOX and GDPR and generates reports.

Retention

Stores long-term historical data, useful for compliance and forensic investigations.

Forensic Analysis

Enables exploration of log and event data to discover details of a security incident.

Threat Hunting

Enables security staff to run queries on log and event data to proactively uncover threats.

Incident Response

Helps security teams identify and respond to security incidents, bringing in all relevant data rapidly.

SOC Automation

Advanced SIEMs can automatically respond to incidents by orchestrating security systems, an approach known as security orchestration, automation and response (SOAR).

SIEM Logging Process

A SIEM server, at its root, is a log management platform. Log management involves collecting the data, managing it to enable analysis, and retaining historical data.

Data Collection

SIEMs collect logs and events from hundreds of organizational systems (for a partial list, see SIEM Integrations below). Each device generates an event every time something happens, and collects the events into a flat log file or database. The SIEM can collect data in four ways:

- Via an agent installed on the device (the most common method)

- By directly connecting to the device using a network protocol or API call

- By accessing log files directly from storage, typically in Syslog format

- Via an event streaming protocol like SNMP, NetFlow or IPFIX

The SIEM is tasked with collecting data from the devices, standardizing it and saving it in a format that enables analysis.
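The four collection methods differ, but they all end with the same step: turning a raw line into structured fields. The following Python sketch shows that normalization for one common format, a BSD-style syslog line as described in RFC 3164 (`<PRI>timestamp host tag: message`). The output field names are our own illustrative choice, not any SIEM's schema, and a production parser would also need to handle RFC 5424 messages, missing fields and vendor-specific quirks.

```python
import re
from typing import Optional

# BSD-style (RFC 3164) layout: <PRI>Mmm dd hh:mm:ss host tag[pid]: message
SYSLOG_RE = re.compile(
    r"^<(?P<pri>\d{1,3})>"
    r"(?P<timestamp>[A-Z][a-z]{2} [ \d]\d \d{2}:\d{2}:\d{2}) "
    r"(?P<host>\S+) "
    r"(?P<tag>[^:\[\s]+)(?:\[(?P<pid>\d+)\])?: "
    r"(?P<message>.*)$"
)


def normalize_syslog(line: str) -> Optional[dict]:
    """Parse one syslog line into a flat, normalized record (None if it does not match)."""
    match = SYSLOG_RE.match(line)
    if match is None:
        return None
    pri = int(match.group("pri"))
    return {
        "facility": pri // 8,  # PRI encodes facility * 8 + severity
        "severity": pri % 8,
        "timestamp": match.group("timestamp"),
        "host": match.group("host"),
        "app": match.group("tag"),
        "pid": int(match.group("pid")) if match.group("pid") else None,
        "message": match.group("message"),
    }


if __name__ == "__main__":
    sample = "<38>Oct 11 22:14:15 web01 sshd[4721]: Failed password for root from 203.0.113.7"
    print(normalize_syslog(sample))
```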

Next-generation SIEMs come pre-integrated with common cloud systems and data sources, allowing you to pull log data directly. Many managed cloud services and SaaS applications do not allow you to install traditional SIEM collectors, making direct integration between SIEM and cloud systems critical for visibility.

Data Management

SIEMs, especially at large organizations, can store mind-boggling amounts of data. The data needs to be:

- Stored – Either on-premises, in the cloud or both

- Optimized and Indexed – To enable efficient analysis and exploration

- Tiered – Hot data necessary for live security monitoring should be on high-performance storage, whereas cold data, which you may one day want to investigate, should be relegated to high-volume inexpensive storage mediums
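Tiering is usually driven by data age. A minimal sketch, assuming a hypothetical policy of 7 days on hot storage and 365 days of total retention (neither figure comes from any standard), might route events like this:

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy values; tune them to your monitoring and compliance needs.
HOT_WINDOW = timedelta(days=7)   # recent data kept on fast storage for live monitoring
RETENTION = timedelta(days=365)  # total retention before data becomes eligible for purge


def storage_tier(event_time: datetime, now: datetime) -> str:
    """Return the storage tier an event belongs in, based only on its age."""
    age = now - event_time
    if age < HOT_WINDOW:
        return "hot"
    if age < RETENTION:
        return "cold"
    return "expired"


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
for days_old in (1, 30, 400):
    print(days_old, storage_tier(now - timedelta(days=days_old), now))
# 1 hot
# 30 cold
# 400 expired
```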

Next-gen SIEM – Next-generation SIEMs are increasingly based on modern data lake technology such as Amazon S3, Hadoop or Elasticsearch, enabling practically unlimited data storage at low cost.

Log Retention

Industry standards like PCI DSS, HIPAA and SOX require that logs be retained for between 1 and 7 years. Large enterprises create a very high volume of logs every day from IT systems (see SIEM Sizing below). SIEMs need to be smart about which logs they retain for compliance and forensic requirements. SIEMs use the following strategies to reduce log volumes:

- Syslog Servers – Syslog is a standard which normalizes logs, retaining only essential information in a standardized format. Syslog lets you compress logs and retain large quantities of historical data.

- Deletion Schedules – SIEMs automatically purge old logs that are no longer needed for compliance.

- Log Filtering – Not all logs are needed for your organization's compliance requirements or for forensic purposes. Logs can be filtered by source system, by time, or by other rules defined by the SIEM administrator.

- Summarization – Log data can be summarized to maintain only important data elements such as the count of events, unique IPs, etc.
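Filtering and summarization can be combined in a single pass. The sketch below is illustrative only: the retained source names and the rule of dropping syslog severity 7 (debug) events are hypothetical examples of administrator-defined rules, and the summary keeps just the event count per source and the unique IPs, as described above.

```python
from collections import Counter
from typing import Iterable

# Hypothetical filter rules standing in for what a SIEM administrator would define.
RETAINED_SOURCES = {"firewall", "vpn", "domain_controller"}
DEBUG_SEVERITY = 7  # syslog severity 7 = debug


def keep(event: dict) -> bool:
    """Log filtering: keep only retained sources and drop debug-level noise."""
    return event["source"] in RETAINED_SOURCES and event["severity"] < DEBUG_SEVERITY


def summarize(events: Iterable[dict]) -> dict:
    """Summarization: collapse kept events into counts and unique source IPs."""
    kept = [e for e in events if keep(e)]
    return {
        "event_count": len(kept),
        "count_by_source": dict(Counter(e["source"] for e in kept)),
        "unique_ips": sorted({e["src_ip"] for e in kept}),
    }


events = [
    {"source": "firewall", "severity": 4, "src_ip": "10.0.0.5"},
    {"source": "firewall", "severity": 7, "src_ip": "10.0.0.6"},  # debug: filtered out
    {"source": "printer", "severity": 3, "src_ip": "10.0.0.9"},   # source not retained
    {"source": "vpn", "severity": 5, "src_ip": "10.0.0.5"},
]
print(summarize(events))
# {'event_count': 2, 'count_by_source': {'firewall': 1, 'vpn': 1}, 'unique_ips': ['10.0.0.5']}
```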

Next-gen SIEM – Historic logs are not only useful for compliance and forensics. They can also be used for deep behavioral analysis. Next-generation SIEMs provide user and entity behavior analytics (UEBA) technology, which uses machine learning and behavioral profiling to intelligently identify anomalies or trends, even if they weren’t captured in the rules or statistical correlations of traditional SIEMs.

Next-generation SIEMs leverage low-cost distributed storage, allowing organizations to retain full source data. This enables deep behavioral analysis of historic data, to catch a broader range of anomalies and security issues.
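Real UEBA products rely on machine learning and statistical models; the sketch below shows only the simplest behavioral-profiling idea, flagging a user's first access to a host outside their established history. The class name, the `min_history` cutoff and the host names are hypothetical, and this is not how any particular product implements UEBA.

```python
from collections import defaultdict


class AccessProfile:
    """Toy behavioral profile: remembers which hosts each user has accessed before."""

    def __init__(self, min_history: int = 3):
        self.seen = defaultdict(set)    # user -> set of hosts observed so far
        self.min_history = min_history  # hypothetical: skip users without an established baseline

    def learn(self, user: str, host: str) -> None:
        """Add one observed (user, host) access to the baseline."""
        self.seen[user].add(host)

    def is_anomalous(self, user: str, host: str) -> bool:
        """Flag access to a host the user has never touched, once a baseline exists."""
        history = self.seen.get(user, set())
        return len(history) >= self.min_history and host not in history


profile = AccessProfile()
for host in ("laptop-17", "mail-01", "files-02"):
    profile.learn("alice", host)
print(profile.is_anomalous("alice", "mail-01"))     # False: seen before
print(profile.is_anomalous("alice", "db-prod-01"))  # True: first access to a new host
```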

Tips from the expert

Steve Moore is Vice President and Chief Security Strategist at Exabeam, helping drive solutions for threat detection and advising customers on security programs and breach response. He is the host of “The New CISO Podcast,” a Forbes Tech Council member, and Co-founder of TEN18 at Exabeam.

In my experience, here are tips that can help you build and maintain a robust SIEM architecture to maximize its capabilities:

Integrate identity-centric data

Include identity logs (from Active Directory, Okta, etc.) to enrich incident analysis. This enables better detection of lateral movement, privilege escalation, and anomalous access patterns.
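Enrichment usually means joining each event with identity attributes. The sketch assumes a hypothetical in-memory export of directory data keyed by username; in practice this context would come from the identity provider's logs or API, which are not shown here.

```python
# Hypothetical identity context, e.g. exported from a directory such as Active Directory or Okta.
IDENTITIES = {
    "jsmith": {"department": "Finance", "privileged": False},
    "admin.kim": {"department": "IT", "privileged": True},
}
UNKNOWN = {"department": "unknown", "privileged": False}


def enrich(event: dict, identities: dict = IDENTITIES) -> dict:
    """Return a copy of the event with the account's identity attributes attached."""
    identity = dict(identities.get(event.get("user"), UNKNOWN))  # copy, never share state
    return {**event, "identity": identity}


event = {"user": "admin.kim", "action": "login", "host": "db-prod-01"}
enriched = enrich(event)
if enriched["identity"]["privileged"]:
    print(f"privileged login by {enriched['user']} on {enriched['host']}")
```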

Streamline log source prioritization

Identify high-priority systems and log sources based on risk profiles and regulatory importance. Ensure these critical sources are ingested and parsed first to prevent log overload during high EPS (events per second) scenarios.
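One way to apply these priorities under load is a priority queue in front of the parser, so that when events back up, critical sources are drained first. The source names and priority values below are hypothetical placeholders for your own risk ranking.

```python
import heapq
import itertools

# Hypothetical priorities: lower number = ingest first.
SOURCE_PRIORITY = {"domain_controller": 0, "firewall": 1, "edr": 1, "web_proxy": 2}
DEFAULT_PRIORITY = 3


class IngestQueue:
    """Buffer that releases events from high-priority sources first when the pipeline is backlogged."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker keeps FIFO order within a priority

    def put(self, event: dict) -> None:
        priority = SOURCE_PRIORITY.get(event["source"], DEFAULT_PRIORITY)
        heapq.heappush(self._heap, (priority, next(self._counter), event))

    def drain(self, max_events: int) -> list:
        """Pop up to max_events events, highest priority first."""
        batch = []
        while self._heap and len(batch) < max_events:
            batch.append(heapq.heappop(self._heap)[2])
        return batch


q = IngestQueue()
for source in ("web_proxy", "printer", "domain_controller", "firewall"):
    q.put({"source": source})
print([e["source"] for e in q.drain(2)])  # ['domain_controller', 'firewall']
```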

Regularly validate data integrity

Establish mechanisms to check the completeness and accuracy of ingested logs. Missing or corrupted logs can hinder both real-time monitoring and forensic investigations.
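Two simple integrity checks are to flag sources that have gone silent for longer than expected, and to look for gaps in per-source sequence numbers. The sketch assumes each source's events carry an increasing sequence number, which not every log source provides; the one-hour silence limit in the usage example is an arbitrary illustration.

```python
from datetime import datetime, timedelta


def find_silent_sources(last_seen: dict, now: datetime, max_silence: timedelta) -> list:
    """Return sources that have not sent any event within max_silence."""
    return sorted(src for src, ts in last_seen.items() if now - ts > max_silence)


def find_sequence_gaps(seq_numbers: list) -> list:
    """Return inclusive (start, end) ranges of sequence numbers missing from a stream."""
    ordered = sorted(set(seq_numbers))
    gaps = []
    for prev, cur in zip(ordered, ordered[1:]):
        if cur - prev > 1:
            gaps.append((prev + 1, cur - 1))
    return gaps


now = datetime(2024, 6, 1, 12, 0)
last_seen = {"firewall": now - timedelta(hours=2), "domain_controller": now - timedelta(minutes=5)}
print(find_silent_sources(last_seen, now, timedelta(hours=1)))  # ['firewall']
print(find_sequence_gaps([1, 2, 3, 6, 7, 10]))                  # [(4, 5), (8, 9)]
```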

Employ intelligent data tiering

Use a multi-tiered storage strategy to optimize cost and performance. For example, store active investigation data in hot storage while archiving compliance logs in cheaper, slower storage solutions.

Create dynamic thresholds for alerts

Static thresholds can lead to alert fatigue or missed incidents. Use dynamic, contextual thresholds that adjust based on time, user behavior, and system activity to increase detection accuracy.
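A common way to build a dynamic threshold is a rolling baseline: alert when a value exceeds the recent mean by more than k standard deviations. The window size, `k = 3` and the minimum sample count below are hypothetical defaults, not recommended values.

```python
from collections import deque
from statistics import mean, pstdev


class DynamicThreshold:
    """Flags a value when it exceeds mean + k * stddev of a rolling window of recent values."""

    def __init__(self, window: int = 24, k: float = 3.0, min_samples: int = 5):
        self.history = deque(maxlen=window)  # e.g. the last 24 hourly failed-login counts
        self.k = k
        self.min_samples = min_samples       # don't alert until a baseline exists

    def check(self, value: float) -> bool:
        alert = False
        if len(self.history) >= self.min_samples:
            values = list(self.history)
            threshold = mean(values) + self.k * pstdev(values)
            alert = value > threshold
        self.history.append(value)  # the baseline keeps adapting to new data
        return alert


detector = DynamicThreshold(window=24, k=3.0)
hourly_failed_logins = [10, 12, 11, 9, 10, 11, 40]
print([detector.check(v) for v in hourly_failed_logins])
# [False, False, False, False, False, False, True]
```

Note that this sketch folds every value, including anomalous ones, into the baseline, so a sustained attack gradually raises the threshold; a production design might exclude alerting values or keep separate baselines per hour of day.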

The Log Flow

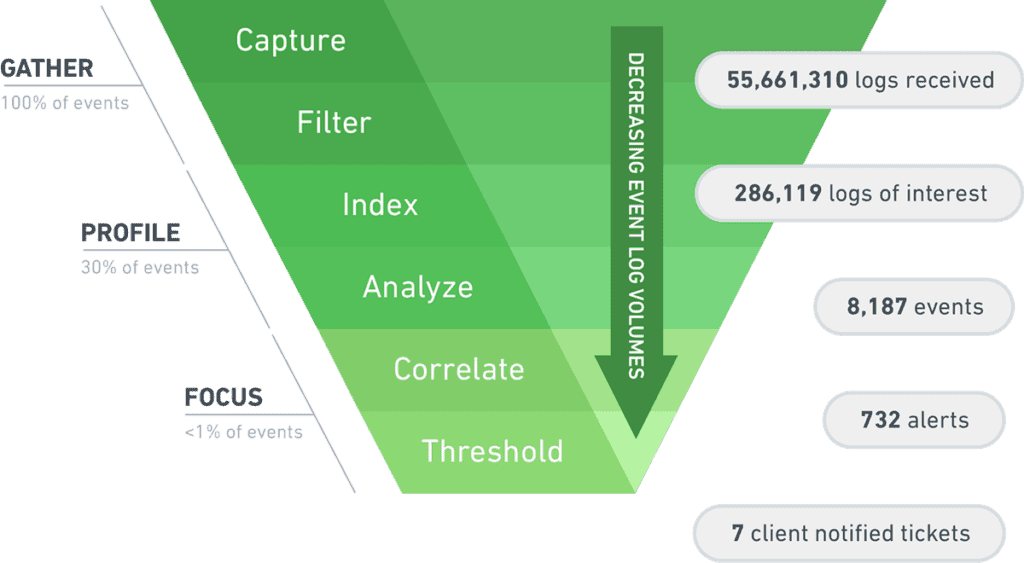

A SIEM captures 100 percent of log data from across your organization. But then data starts to flow down the log funnel, and hundreds of millions of log entries can be whittled down to only a handful of actionable security alerts.

SIEMs filter out noise in logs to keep pertinent data only. Then they index and optimize the relevant data to enable analysis. Finally, around 1% of data, which is the most relevant for your security posture, is correlated and analyzed in more depth. Of those correlations, the ones which exceed security thresholds become security alerts.
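The correlation step at the narrow end of the funnel can be as simple as a windowed count. The following sketch implements one hypothetical rule (five or more failed logins from the same source IP within 60 seconds); the event field names (`ts`, `action`, `src_ip`) are assumptions, and real SIEM correlation engines support far richer rule languages.

```python
from collections import defaultdict, deque

# Hypothetical rule: alert when one source IP produces 5 failed logins within 60 seconds.
WINDOW_SECONDS = 60
THRESHOLD = 5


def correlate_failed_logins(events):
    """Yield an alert when a source IP reaches THRESHOLD failures inside the sliding window.

    Events are assumed to be sorted by their 'ts' field (seconds).
    """
    recent = defaultdict(deque)  # src_ip -> timestamps of recent failures
    for event in events:
        if event["action"] != "login_failed":
            continue  # funnel stage: ignore events this rule does not care about
        window = recent[event["src_ip"]]
        window.append(event["ts"])
        while event["ts"] - window[0] > WINDOW_SECONDS:
            window.popleft()  # drop failures that fell out of the window
        if len(window) == THRESHOLD:
            yield {"rule": "brute_force", "src_ip": event["src_ip"], "ts": event["ts"]}


burst = [{"ts": t, "action": "login_failed", "src_ip": "198.51.100.9"} for t in (0, 10, 20, 30, 40)]
print(list(correlate_failed_logins(burst)))
# [{'rule': 'brute_force', 'src_ip': '198.51.100.9', 'ts': 40}]
```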

SIEM Integrations

SIEM platforms integrate with a large variety of security and organizational data sources, and can parse, aggregate and analyze the data for security significance. Here are just a few examples of data sources.

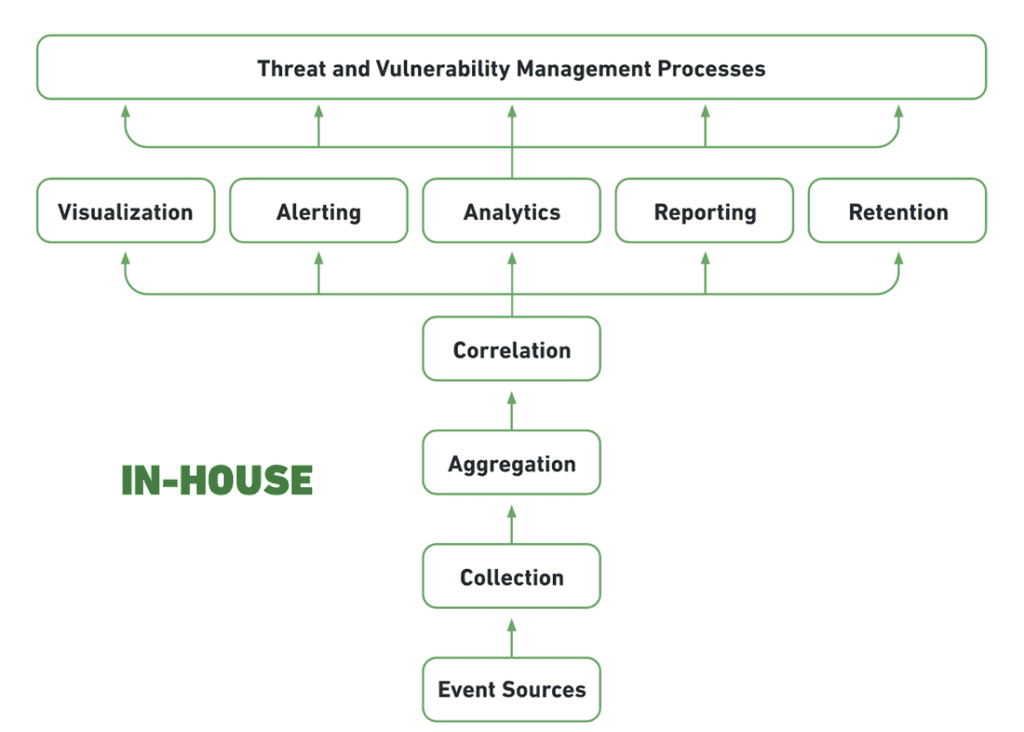

Self-Hosted, Self-Managed

This is the traditional SIEM deployment model—host the SIEM in your data center, often with a dedicated SIEM appliance, maintain storage systems, and manage it with trained security personnel. This model made SIEM a notoriously complex and expensive infrastructure to maintain.

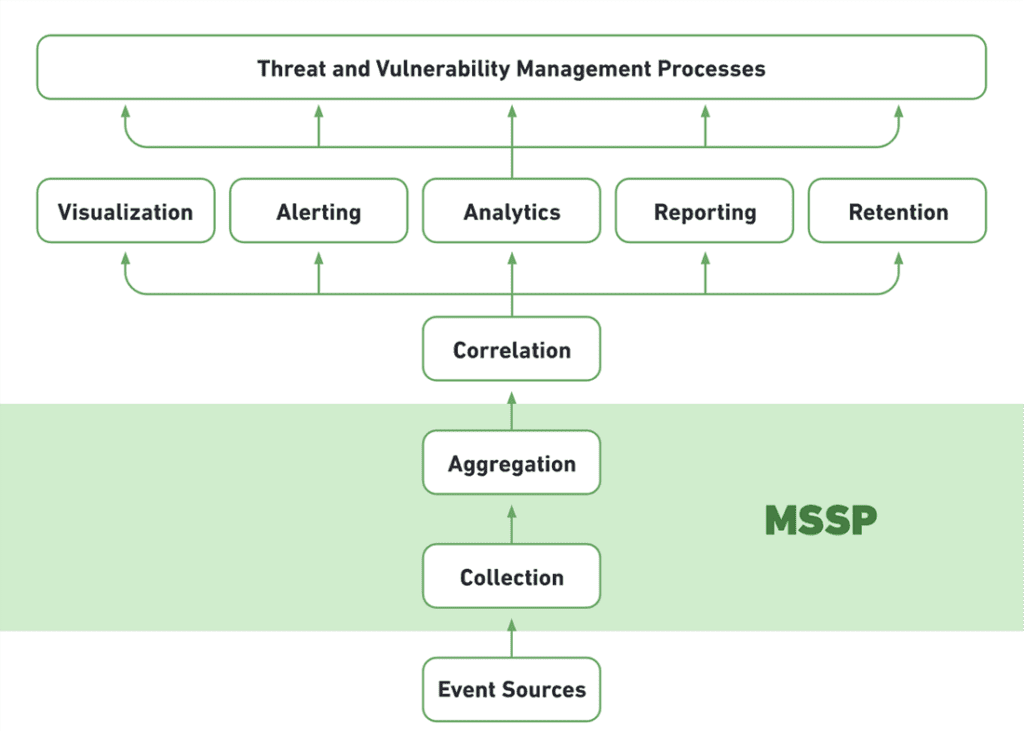

Cloud SIEM, Self-Managed

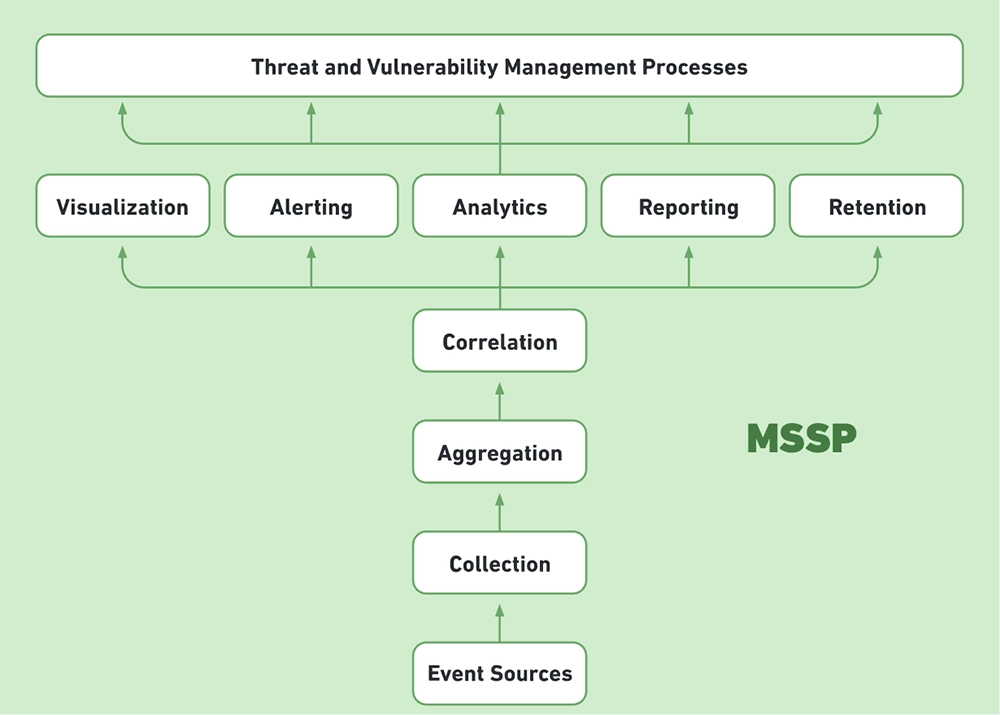

MSSP Handles: Receiving events from organizational systems, collection and aggregation.

You Handle: Correlation, analysis, alerting and dashboards, security processes leveraging SIEM data.

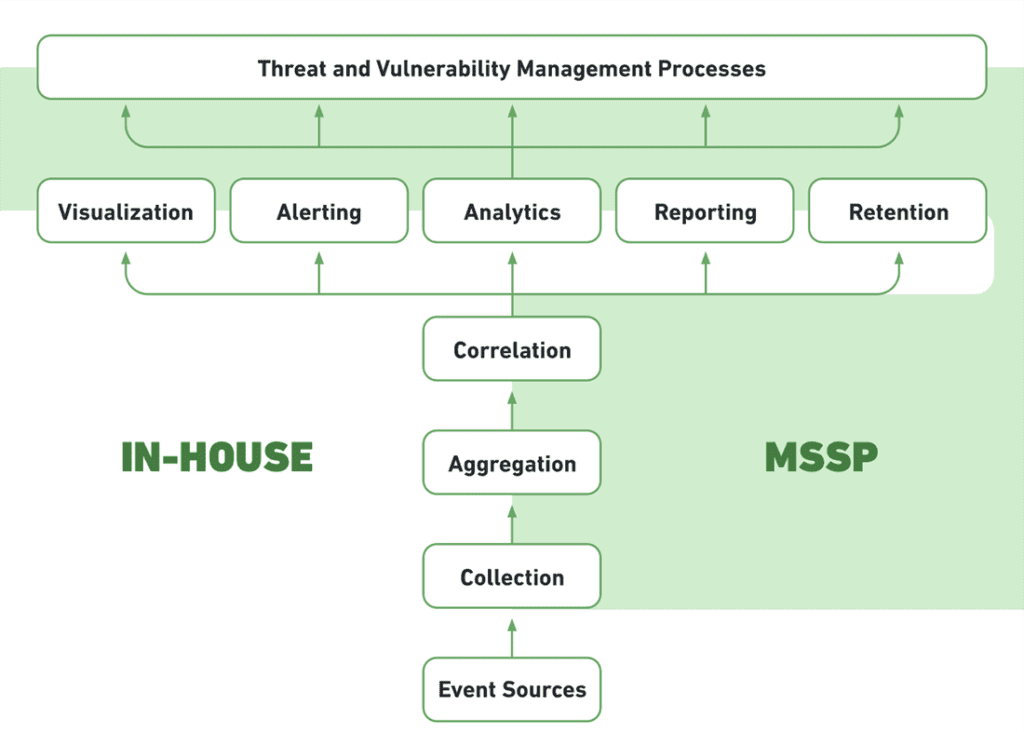

Self-Hosted, Hybrid-Managed

You Handle: Purchasing software and hardware infrastructure.

MSSP Together with Your Security Staff: Deploy SIEM event collection/aggregation, correlation, analysis, alerting and dashboards.

SIEM as a Service

MSSP Handles: Event collection, aggregation, correlation, analysis, alerting and dashboards.

You Handle: Security processes leveraging SIEM data.

Which Hosting Model is Right for You?

The following considerations can help you select a SIEM deployment model:

- Do you have an existing SIEM infrastructure? If you’ve already purchased the hardware and software, opt for the self-hosted, self-managed model, or leverage an MSSP’s expertise to jointly manage the SIEM with your local team.

- Are you able to move data off-premises? If so, a cloud-hosted or fully managed model can reduce costs and management overhead.

- Do you have security staff with SIEM expertise? The human factor is crucial in getting true value from a SIEM. If you don’t have trained security staff, rent the analysis services via a hybrid-managed or SIEM-as-a-Service model.

SIEM Sizing: Velocity, Volume and Hardware Requirements

A majority of SIEMs today are deployed on-premises. This requires organizations to carefully estimate the volume of log and event data they generate, and the system resources required to manage it.

Calculating Velocity: Events Per Second (EPS)

A common measure of velocity is events per second (EPS), defined as: EPS = Number of Security Events / Time Period in Seconds

EPS can vary between normal and peak times. For example, a Cisco router might generate 0.6 events per second on average, but during peak times, such as during an attack, it can generate as many as 154 EPS.

According to the SIEM Benchmarking Guide by the SANS Institute, organizations should strike a balance between normal and peak EPS measurements. It’s not practical, or necessary, to build a SIEM to handle peak EPS for all network devices, because it’s unlikely all devices will hit their peak at once. On the other hand, you must plan for crisis situations, in which the SIEM will be most needed.

A Simple Model for Predicting EPS During Normal and Peak Times

- Measure Normal EPS and Peak EPS, by looking at 90 days of data for the target system

- Estimate the Number of Peaks per Day

- Estimate the Duration in Seconds of a Peak, and by extension, Total Peak Seconds Per Day

- Calculate Total Peak Events per Day = (Total Peak Seconds Per Day) * Peak EPS

- Calculate Total Normal Events per Day = (86,400 – Total Peak Seconds Per Day) * Normal EPS, where 86,400 is the number of seconds in a day

The sum of these two numbers is the total estimated velocity. In addition, the SANS guide recommends adding:

- 10% for headroom

- 10% for growth

The final estimate of events per day is therefore:

(Total Peak Events per Day + Total Normal Events per Day) * 1.10 (headroom) * 1.10 (growth)
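The model above translates directly into code. This sketch uses the 0.6 normal EPS and 154 peak EPS router figures quoted earlier, plus a hypothetical four peaks per day lasting 300 seconds each; substitute your own 90-day measurements.

```python
SECONDS_PER_DAY = 86_400


def estimated_events_per_day(normal_eps: float, peak_eps: float, peaks_per_day: int,
                             peak_duration_s: float, headroom: float = 0.10,
                             growth: float = 0.10) -> float:
    """Estimate daily event volume with the normal/peak model plus headroom and growth."""
    peak_seconds = peaks_per_day * peak_duration_s
    if peak_seconds > SECONDS_PER_DAY:
        raise ValueError("total peak time cannot exceed one day")
    peak_events = peak_seconds * peak_eps
    normal_events = (SECONDS_PER_DAY - peak_seconds) * normal_eps
    return (peak_events + normal_events) * (1 + headroom) * (1 + growth)


# Router example: 1,200 peak seconds at 154 EPS plus 85,200 normal seconds at 0.6 EPS.
print(f"{estimated_events_per_day(0.6, 154, peaks_per_day=4, peak_duration_s=300):,.0f}")
# 285,463
```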


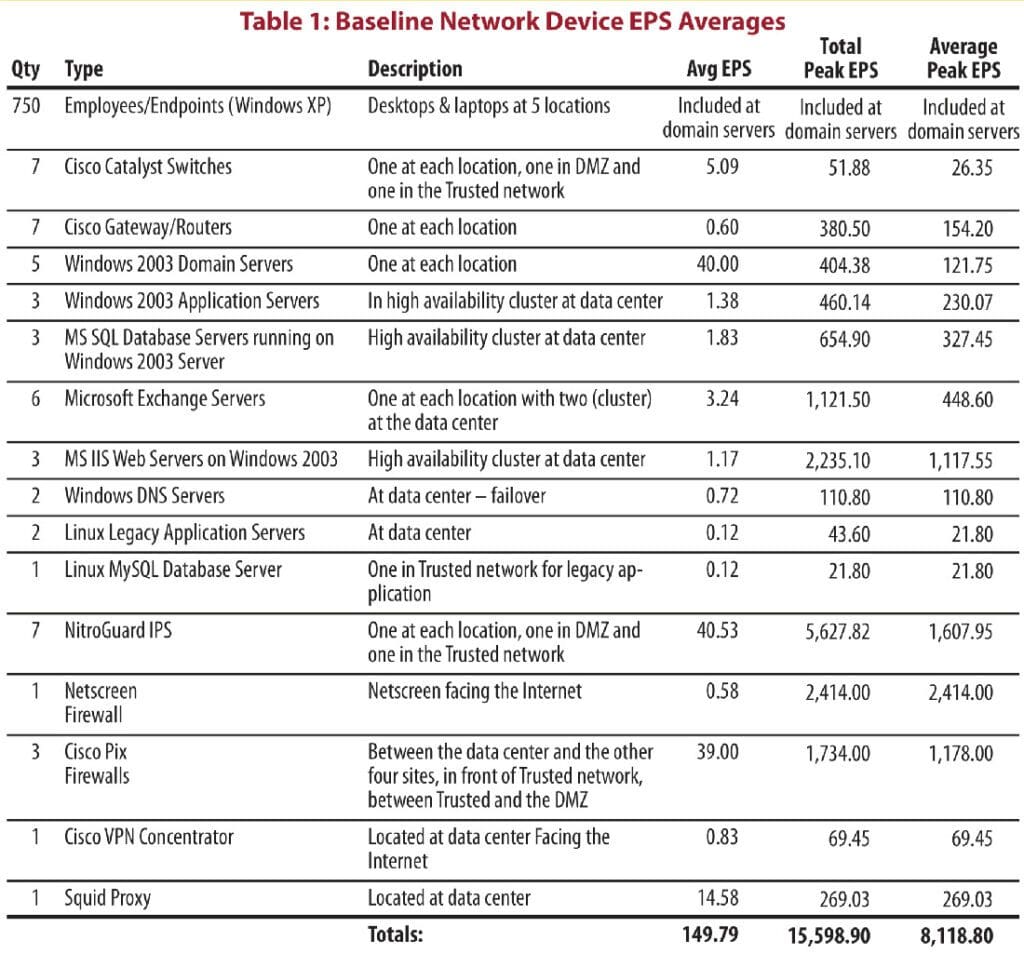

The following table, provided by SANS, shows typical Average EPS (normal EPS) and Peak EPS for selected network devices. The data is several years old but can provide ballpark figures for your initial estimates.

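To size your SIEM, conduct an inventory of the devices you intend to collect logs from. Multiply the number of similar devices by their estimated EPS to get the EPS for each device type, add these up to get total EPS, and multiply by 86,400 (seconds per day) to get the total number of events per day across your network.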

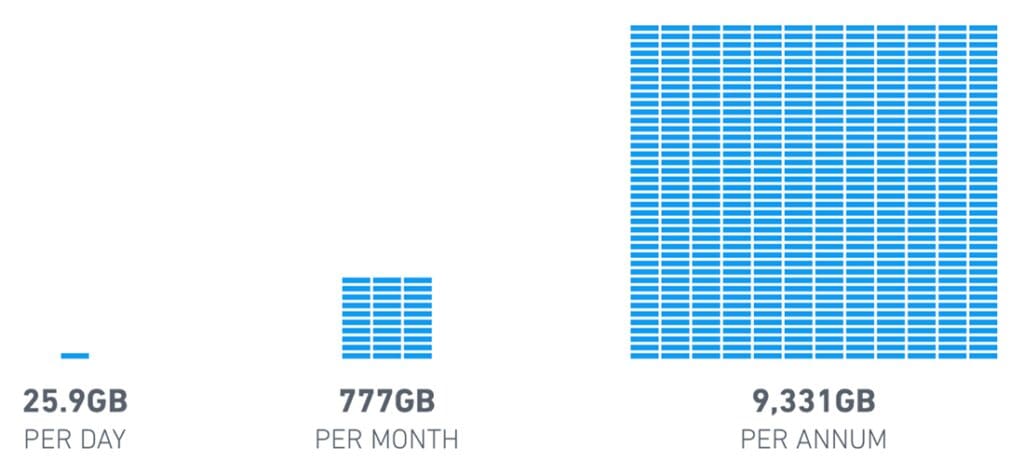
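The inventory step can be sketched the same way. The device counts and most EPS values below are placeholders, not SANS figures (only the 0.6 EPS router average comes from the example earlier); replace them with the values from your own inventory and measurements.

```python
SECONDS_PER_DAY = 86_400

# Hypothetical inventory: device type -> (number of devices, average EPS per device).
INVENTORY = {
    "router": (20, 0.6),
    "firewall": (4, 40.0),
    "windows_server": (50, 0.5),
}


def network_volume(inventory: dict) -> tuple:
    """Return (total EPS, events per day) across all device types."""
    total_eps = sum(count * eps for count, eps in inventory.values())
    return total_eps, total_eps * SECONDS_PER_DAY


total_eps, per_day = network_volume(INVENTORY)
print(f"{total_eps:.1f} EPS, {per_day:,.0f} events per day")
# 197.0 EPS, 17,020,800 events per day
```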

Storage Needs

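A rule of thumb is that an average event occupies 300 bytes. So for every 1,000 EPS (86.4 million events per day), the SIEM needs to store about 25.9 GB of raw event data per day (86.4 million × 300 bytes), or roughly 9.5 TB per year, before compression.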

Hardware Sizing

After you determine your event velocity and volume, consider the following factors to size hardware for your SIEM:

- Storage format – how will files be stored? Using a flat file format, a relational database or an unstructured data store like Hadoop?

- Storage deployment and hardware – is it possible to move data to the cloud? If so, cloud services like Amazon S3 and Azure Blob Storage will be highly attractive for storing most SIEM data. If not, consider what storage resources are available locally, and whether to use commodity storage with Hadoop or NoSQL DBs, or high-performance storage appliances.

- Log compression – what technology is available to compress log data? Many SIEM vendors advertise compression ratios of 8:1 or more.

- Encryption – is there a need to encrypt data as it enters the SIEM data store? Determine software and hardware requirements.

- Hot storage (short-term data) – needs high performance to enable real-time monitoring and analysis.

- Long-term storage (data retention) – needs high volume, low cost storage media to enable maximum retention of historic data.

- Failover and backup – as a mission critical system, the SIEM should be built with redundancy, and be backed with a clear business continuity plan.
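Putting the 300-byte rule of thumb together with retention periods and compression gives a rough storage estimate. The 30-day hot window and the 8:1 compression ratio applied to long-term storage are assumptions for illustration (vendor compression ratios vary), and decimal units (1 GB = 10^9 bytes) are used.

```python
SECONDS_PER_DAY = 86_400
GB = 10**9   # decimal units
TB = 10**12


def storage_bytes(eps: float, days: int, bytes_per_event: int = 300,
                  compression_ratio: float = 1.0) -> float:
    """Raw event volume over `days`, divided by an assumed compression ratio."""
    return eps * SECONDS_PER_DAY * days * bytes_per_event / compression_ratio


hot = storage_bytes(1_000, days=30)                            # hypothetical 30-day hot tier, uncompressed
archive = storage_bytes(1_000, days=365, compression_ratio=8)  # one year of retention at an assumed 8:1
print(f"per day: {storage_bytes(1_000, days=1) / GB:.2f} GB")  # per day: 25.92 GB
print(f"hot tier: {hot / TB:.2f} TB")                          # hot tier: 0.78 TB
print(f"archive: {archive / TB:.2f} TB")                       # archive: 1.18 TB
```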

Scalability and Data Lakes

In the past decade, networks have grown, the number of connected devices has exploded, and data volumes have risen exponentially. In addition, there is a growing need to have access to all historical data—not just a filtered, summarized version of the data—to enable deeper analysis. Modern SIEM technology can make sense of huge volumes of historic data and use it to discover new anomalies and patterns.

In 2015 O’Reilly released a report named The Security Data Lake, which offered a robust approach for storing SIEM data in a Hadoop data lake. The report clarifies that data lakes do not replace SIEMs—the SIEM is still needed for its ability to parse and make sense of log data from many different systems, and later analyze and extract insights and alerts from the data.

The data lake, as a companion to a SIEM, provides:

- Nearly unlimited, low cost storage based on commodity devices.

- New ways of processing big data – tools in the Hadoop ecosystem, such as Hive and Spark, can process huge quantities of data quickly, while still letting traditional SIEM infrastructure query the data via SQL.

- The possibility of retaining all data across a multitude of new data sources, like cloud applications, IoT and mobile devices.
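Data lakes typically make large histories queryable by partitioning them. Hive and Spark, for example, recognize `key=value` directory names as partition columns, so a query filtered on those columns only needs to read the matching directories. The sketch below only computes which date partitions a time-bounded query would touch; the bucket path and the `source`/`dt` partition columns are hypothetical.

```python
from datetime import date, timedelta


def partition_path(root: str, source: str, day: date) -> str:
    """Hive-style partition directory, e.g. <root>/source=firewall/dt=2024-05-01."""
    return f"{root}/source={source}/dt={day.isoformat()}"


def partitions_for_query(root: str, source: str, start: date, end: date) -> list:
    """List only the partitions a query over [start, end] (inclusive) must read."""
    days = (end - start).days
    return [partition_path(root, source, start + timedelta(days=i)) for i in range(days + 1)]


for path in partitions_for_query("s3://example-siem-lake/events", "firewall",
                                 date(2024, 5, 1), date(2024, 5, 3)):
    print(path)
# s3://example-siem-lake/events/source=firewall/dt=2024-05-01
# s3://example-siem-lake/events/source=firewall/dt=2024-05-02
# s3://example-siem-lake/events/source=firewall/dt=2024-05-03
```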

Today, besides the heavyweight Hadoop, additional technical options exist for implementing data lakes, including Elasticsearch, Cassandra and MongoDB.

Next-gen SIEM – Another benefit of data lake storage is that hardware costs become predictable. You can simply add nodes to the data lake, running on commodity or cloud hardware, to grow data storage linearly. SIEMs based on data lake technology can easily add new data sources or expand data retention at low cost.

SIEM Architecture: Then and Now

Historically, SIEMs were expensive, monolithic enterprise infrastructures, built with proprietary software and custom hardware provisioned to handle their large data volumes. Along with the software industry in general, SIEMs are evolving to become more agile and lightweight, and much smarter than they were before.

Next-generation SIEM solutions use a modern architecture that is more affordable, easier to implement, and helps security teams discover real security issues faster:

- Modern data lake technology – offering big data storage with unlimited scalability, low cost and improved performance.

- New managed hosting and management options – MSSPs are helping organizations implement SIEM by running part of the infrastructure (on-premises or in the cloud) and by providing expertise to manage security processes.

- Dynamic scalability and predictable costs – SIEM administrators no longer need to meticulously calculate sizing, and make architectural changes when data volumes grow. SIEM storage can now grow dynamically and predictably when volumes increase.

- Enrich data with context – Context is essential for filtering out false positives, so the SIEM can focus its analysis on real threats and help teams detect and respond to them effectively.

- New insights with User and Entity Behavior Analytics (UEBA) – SIEM architectures today include advanced analytics components such as machine learning and behavioral profiling, which go beyond traditional correlations to discover new relationships and anomalies across huge data sets. Read more in our chapter on UEBA.

- Powering incident response – Modern SIEMs leverage Security Orchestration, Automation and Response (SOAR) technology that helps identify and automatically respond to security incidents, and supports incident investigation by Security Operations Center (SOC) staff. Read more in our chapter on incident response.
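At its core, a SOAR playbook maps an alert type to an ordered list of response actions. In the sketch below the actions only return descriptions of what they would do; in a real deployment they would call firewall, EDR or identity-provider APIs. The rule names, action functions and fallback behavior are hypothetical.

```python
from typing import Callable


# Hypothetical response actions; a real SOAR platform would call security-tool APIs here.
def block_ip(alert: dict) -> str:
    return f"block {alert['src_ip']} at perimeter firewall"


def disable_account(alert: dict) -> str:
    return f"disable account {alert['user']}"


def open_ticket(alert: dict) -> str:
    return f"open ticket for {alert['rule']}"


PLAYBOOKS: dict[str, list[Callable[[dict], str]]] = {
    "brute_force": [block_ip, open_ticket],
    "impossible_travel": [disable_account, open_ticket],
}


def respond(alert: dict) -> list[str]:
    """Run every action in the alert's playbook; unknown rules fall back to an analyst ticket."""
    actions = PLAYBOOKS.get(alert["rule"], [open_ticket])
    return [action(alert) for action in actions]


print(respond({"rule": "brute_force", "src_ip": "203.0.113.7"}))
# ['block 203.0.113.7 at perimeter firewall', 'open ticket for brute_force']
```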
