Decoding the 2025 MITRE ATT&CK® Evals: A Call for Clarity and a Guide for Analysts

- Jan 13, 2026

- Brook Chelmo

- 5 minutes to read

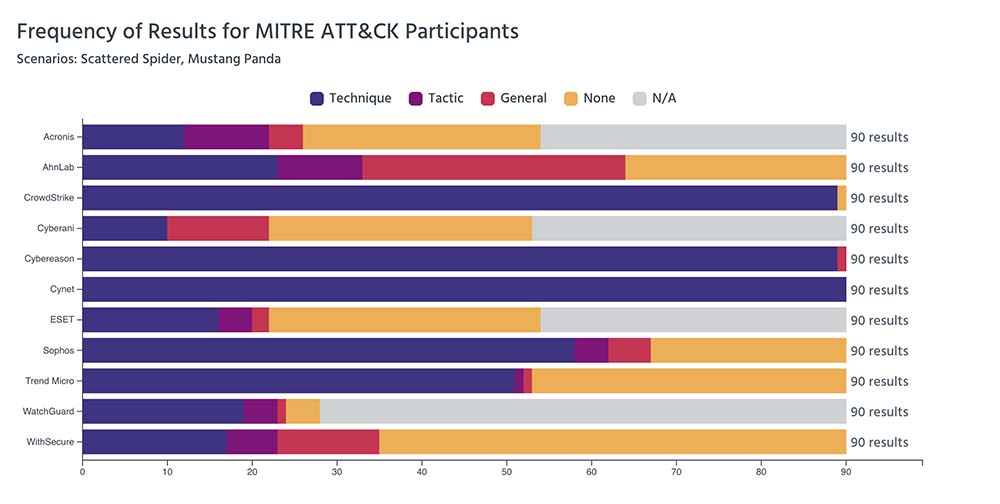

The latest MITRE ATT&CK® Enterprise Evaluations are out, featuring scenarios that emulate sophisticated actors like Scattered Spider and Mustang Panda. While every release of the findings is a significant event for the security community, this year’s evaluation highlights both new and recurring concerns for security professionals.

A Call for Clarity and Community

After years in the EDR/XDR industry, I’ve observed two concerning trends in the ATT&CK Evaluations.

First, vendor participation has dwindled from nearly 30 in past rounds to just 11 this year. This is a loss for customers, vendors, and the entire security community. As a company that integrates with all EDR vendors, we know their data is invaluable to the broader security narrative. It’s time to bring everyone back to the table. We all benefit from a deeper understanding of how these security clients behave, from their use of heuristics to their reliance on threat intelligence.

Second, the presentation of the results changes from year to year, making them difficult for even seasoned professionals to interpret. This inconsistency fuels the spread of misinformation based on cherry-picked data. The inaccessibility of the raw JSON file at the time of writing only compounds the issue. In their current state, the results work like a Rorschach test that each vendor interprets in its own way.

To restore clarity and authority, the MITRE ATT&CK Foundation should make the results more readable for the casual observer and host a webcast on release day to present its official findings.

The High-Stakes Game of EDR Evaluations

Why are vendors stepping back? These evaluations are high-pressure, high-stakes events. A great result offers a golden marketing opportunity; a poor one means months of damage control. This pressure exists because many customers accept vendor marketing claims without digging into the nuances of the results.

Participating also requires significant investment in engineering resources. Teams spend months preparing their product and working with the MITRE ATT&CK Foundation to interpret results and make configuration changes during the test. The process can feel like an 18th-century debutante ball. Everyone arrives in their finest attire, hoping to win favor.

This pressure often leads to a “MITRE Mode,” a version of the product specially tuned for the test, rather than the standard, “off-the-shelf” version a customer would use. For example, during the Turla evaluation in 2023, many vendors incorporated a leaked version of MITRE’s testing tool into their threat intelligence modules. Many are also accused of deploying resource-intensive configurations that would be unacceptable in a real-world environment.

These evaluations could foster greater transparency by adding performance impact as a metric, ensuring high-fidelity detection does not come at the cost of business operations. This would be similar to how AV-TEST evaluates performance and false positives. Another step would be to test an off-the-shelf version from a reseller, a practice proven effective by researchers at the University of Piraeus in Greece.

MITRE must address the perception that some vendors are “cheating,” which discourages others who can’t commit similar resources from participating.

13 Tips to Be Your Own Analyst

Selecting a vendor based on a small sample of tests is ill-advised. The evaluations break down only two malware variants into individual attacks, which does not accurately reflect how a product will perform in your environment or against other unknown threats. It’s like choosing a family dog based on which breed won the last Westminster agility test.

Use these 13 tips to interpret the results and select the right EDR vendor for your organization.

| Tip | How to Apply It |
| --- | --- |
| 1. Prioritize “Technique” Detections | Look for vendors with detections at the “Technique” level, which identify how the attacker is pursuing a specific goal. This provides richer context than generic alerts and shows a deeper understanding of behavior, not just signatures. |
| 2. Check for Detection Delays | The evaluations measure the time between an event and its detection. An alert delayed by minutes or hours is less useful for real-time response. Prioritize vendors who provide timely, actionable alerts. |
| 3. Follow the Kill Chain | Every EDR in the evaluation will stop these attacks; the key is understanding where. Some block initial access while others excel at deep visibility later. One will align better with your security philosophy. |
| 4. Look for Configuration Changes | This reveals when a vendor adjusted the product mid-test to improve a detection. Frequent changes suggest a “MITRE Mode” tuned for the lab, not a product ready for your environment. |
| 5. Test It Yourself | Confirm the evaluation results with a proof-of-concept test in your own environment. Use malware from public repositories to check both static and dynamic defenses against the latest threats in a safe setting. |
| 6. Test Response Options | Validate how automated response actions behave under pressure. Test network containment, endpoint isolation, process termination, file rollback, and integrated credential resets. Some tools delay execution or require extra approvals; you need to know how quickly and consistently the system reacts to contain a threat. |
| 7. Evaluate Total Cost of Ownership | Licensing is just one part of the cost. Factor in data ingestion, storage tiers, optional add-ons, required infrastructure, and potential security operations workload. A tool that reduces analyst effort can even offset a higher initial price. The right question isn’t “What does it cost?” but “What does it cost to run this every day for three years?” |
| 8. Measure Agent Performance | An EDR agent must provide prevention, protection, and visibility without becoming a burden. Test its impact on CPU, memory, and application performance. Pay attention to agent stability to avoid crashes or conflicts. A high-fidelity detection engine is useless if it slows endpoints or breaks workflows. |
| 9. Check Interoperability | Ensure the EDR works alongside your IT agents, VPN clients, and DLP tools. Conflicts at the kernel level can cause instability that is hard to diagnose. Choose a vendor that demonstrates compatibility in real deployments, not just in documentation. |
| 10. Validate Stack Integration | An EDR must enrich the ecosystem it supports. Look for native integrations with your SIEM, SOAR, ticketing platform, identity provider, and cloud services. Verify that it shares meaningful context and accelerates your threat detection, investigation, and response (TDIR) rhythm rather than forcing a rebuild. |
| 11. Understand Security Operations Needs | Determine the true operational lift. How many analysts does it take to operate the product efficiently? What skills do they need? What does daily life look like with it running at scale? A stress test will reveal if a tool simplifies investigations or becomes another inbox to babysit. |
| 12. Scrutinize False Positives | High false positive rates drain analyst time, erode trust in the tool, and lead to real threats being ignored. Ask for real-world rates, not lab claims. Review how the product prioritizes alerts, how well it correlates related activity, and how much tuning is required to keep noise manageable. A strong EDR distinguishes credible threats from benign activity rather than just reporting that something happened. |
| 13. Assess Roadmap and Vendor Stability | Innovation pace and financial health matter. Ask where the vendor is investing, how they plan to evolve detections, and how they are embedding AI into workflows. A strong roadmap signals a long-term partner. |
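
If you want to apply tips 1, 2, and 4 to your own notes rather than to a vendor's summary chart, a small script can do the counting. What follows is a minimal sketch, not MITRE's format: the field names (`vendor`, `step`, `detection`, `modifiers`), the vendor names, and every sample row are hypothetical and hand-made for illustration. You would need to map whatever results you can actually obtain into this shape yourself.

```python
from collections import Counter

# Hypothetical, hand-made records. The field names and values are
# assumptions for illustration and do not reflect MITRE's published schema.
SAMPLE_RESULTS = [
    {"vendor": "VendorA", "step": "1.A.1", "detection": "Technique", "modifiers": []},
    {"vendor": "VendorA", "step": "1.A.2", "detection": "Technique", "modifiers": ["Delayed"]},
    {"vendor": "VendorA", "step": "2.A.1", "detection": "General", "modifiers": ["Configuration Change"]},
    {"vendor": "VendorA", "step": "2.A.2", "detection": "None", "modifiers": []},
    {"vendor": "VendorB", "step": "1.A.1", "detection": "Technique", "modifiers": ["Configuration Change"]},
    {"vendor": "VendorB", "step": "1.A.2", "detection": "Technique", "modifiers": []},
    {"vendor": "VendorB", "step": "2.A.1", "detection": "Technique", "modifiers": ["Delayed", "Configuration Change"]},
    {"vendor": "VendorB", "step": "2.A.2", "detection": "Tactic", "modifiers": []},
]


def summarize(results):
    """Count, per vendor, the figures that tips 1, 2, and 4 ask about."""
    summary = {}
    for row in results:
        stats = summary.setdefault(row["vendor"], Counter())
        stats["steps"] += 1
        if row["detection"] == "Technique":
            stats["technique"] += 1  # tip 1: Technique-level detections
            if not row["modifiers"]:
                # Technique detection with no delay and no mid-test tuning
                stats["technique_unmodified"] += 1
        if "Delayed" in row["modifiers"]:
            stats["delayed"] += 1  # tip 2: detection delays
        if "Configuration Change" in row["modifiers"]:
            stats["config_change"] += 1  # tip 4: configuration changes
    return summary


if __name__ == "__main__":
    for vendor, stats in sorted(summarize(SAMPLE_RESULTS).items()):
        print(
            f"{vendor}: {stats['technique']}/{stats['steps']} Technique detections, "
            f"{stats['technique_unmodified']} without modifiers, "
            f"{stats['delayed']} delayed, {stats['config_change']} configuration changes"
        )
```

In this made-up sample, VendorB has more Technique detections than VendorA, but only one of them arrived without a delay or a configuration change, which is the kind of nuance a headline percentage can hide.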

Our Strongest Defense is a Shared One

Our strongest defense is one built on a common language. The ATT&CK framework provides that language by mapping threats to specific tactics, techniques, and procedures (TTPs), allowing the security community to move forward together.

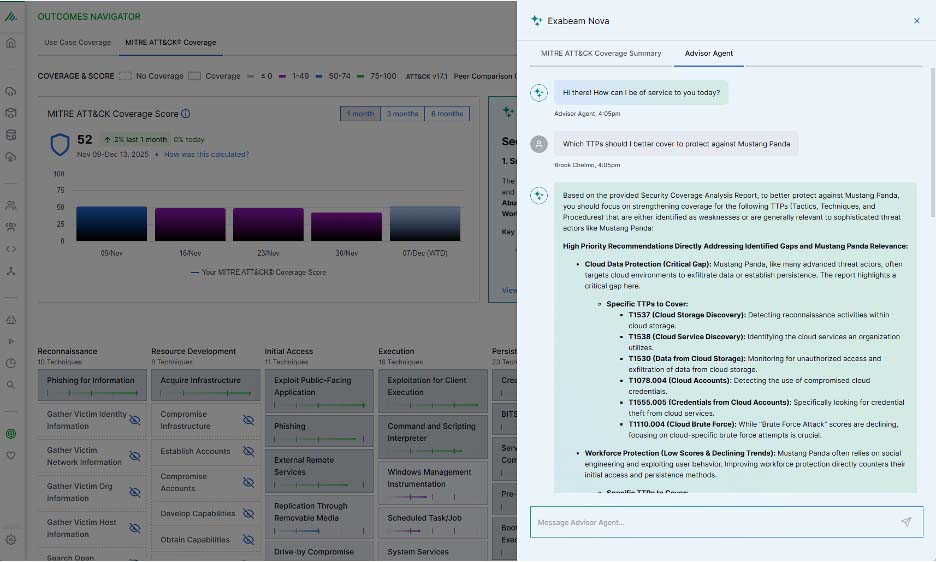

At Exabeam, this principle is fundamental. We use TTPs to enrich every alert with context, helping analysts understand the why behind a threat, not just the what. The log data from our EDR partners is the basis of that security story, which is why we are so committed to supporting both the MITRE Foundation and the EDR vendor community.

To our EDR partners: Let’s rejoin the MITRE ATT&CK Evaluations and recommit to the transparency that makes our industry stronger.

This commitment to a TTP-based framework is also why we built Outcomes Navigator. It allows our customers to visualize their security coverage, either for use cases or directly against the ATT&CK framework, and identify gaps. Understanding your defense is the first step to improving it. Exabeam Nova provides further insight into how your coverage has improved and where to focus next.

To learn more about how CISOs are using agentic AI like Exabeam Nova, download our white paper, A CISO’s Guide to the New Era of Agentic AI.

Brook Chelmo

Director of Product Marketing | Exabeam | Brook Chelmo is a seasoned cybersecurity strategist and product marketing leader with deep expertise in emerging threats, threat actor behavior, and security technology. He has conducted embedded research with ransomware groups, including direct engagement with Russian cybercriminals, offering rare insights into their operations, motivations, and monetization strategies. Known for delivering award-winning and standing-room-only presentations at global security conferences, Brook helps security teams stay ahead of evolving threats by translating complex threat intelligence into actionable strategies. His work spans product development, threat research, and education, supporting both the advancement of security technology and the global community’s ability to defend against cyber risk.
