Machine Learning SDK for Security Analytics

- Nov 14, 2017

- Derek Lin

I was once asked by an aspiring data scientist what the challenges are in getting into the field of user and entity behavior analytics (UEBA). After all, data scientists have been applying their skills successfully across many industries. Yet I believe security analytics poses challenges that amount to high barriers to entry for a data scientist new to the area. First, there is the obvious need to collect and process security logs with all of their 3Vs (volume, variety, and velocity). Second, a data scientist new to security usually lacks the domain expertise needed to interpret security logs, which is a challenge unique to this field. Finally, operationalizing machine learning models is unlikely to succeed unless the work is fully integrated with existing security tools. In this blog, I describe an infrastructure built on an open security data science platform whose SDK/API removes these barriers to entry to UEBA for data scientists.

While addressing the 3Vs of security logs is important, a security analytics infrastructure is not merely a data lake where raw logs are parked and indexed for search. Fast search is critical for post-incident response, but UEBA is about proactive monitoring of events for anomaly detection. UEBA work requires knowing the security meaning of the underlying data before indicators or models can be designed and built. Beyond having the basic capacity of a data lake with fast search, an analytics infrastructure for UEBA must address at least the following in order to bridge the security knowledge gap for data scientists:

Assigning semantic meanings to data fields, reducing the learning curve

- Many logs from enterprise security tools and products are not easily interpretable; for example, a Windows authentication event log is notoriously difficult to understand.

- Data in logs is often not consistent from version to version. Some logs don’t even come with ready documentation; it takes a pair of trained eyes to decipher the content.

Enriching data with intelligent transformation and context, increasing data values

- Security events need critical enrichment prior to analysis. For example, because DHCP reassigns IP addresses over time, events recorded with only IP addresses must be mapped to hostnames before analysis; otherwise, noisy modeling results can be expected.

- Some low-level events are too granular to be useful and are best normalized to meta events prior to sensible aggregation. For example, Windows NTLM and Kerberos authentication events are best lumped together; keeping them separate adds no information and only fragments the statistics.

- A goal of UEBA is to identify anomalous user sessions. A session is a collection of relevant activity events. Simply grouping events by users is not sufficient. A session may consist of activities from two or more users, for example, due to account switching activities. Intelligent grouping of events first reduces noise in downstream analytics.
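The first two enrichment steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Exabeam's implementation: the lease record layout, the field names (`ip`, `time`, `event_type`), the event type labels, and the helper names are all hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict

# Hypothetical mapping from low-level authentication events to one meta event.
META_EVENT = {"ntlm-logon": "remote-logon", "kerberos-logon": "remote-logon"}


def build_lease_index(leases):
    """Index DHCP leases by IP address.

    `leases` is an iterable of (lease_start, ip, hostname) tuples; this
    layout is an assumption made for the example.
    """
    index = defaultdict(list)
    for start, ip, hostname in sorted(leases):
        index[ip].append((start, hostname))
    return index


def resolve_hostname(index, ip, event_time):
    """Return the hostname that most recently leased `ip` at or before `event_time`."""
    history = index.get(ip, [])
    starts = [start for start, _ in history]
    pos = bisect_right(starts, event_time)
    return history[pos - 1][1] if pos else None


def enrich(event, index):
    """Return a copy of `event` with a resolved host and a normalized event type."""
    enriched = dict(event)
    enriched["host"] = resolve_hostname(index, event["ip"], event["time"])
    enriched["event_type"] = META_EVENT.get(event["event_type"], event["event_type"])
    return enriched
```

Resolving the hostname by lease time rather than by the latest lease matters here: the same IP address can belong to different machines on different days, and a lookup that ignores time would merge their behavior into one profile.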

A generic data lake without security knowledge-infused data transformation puts a tremendous burden on data scientists, who must overcome a steep learning curve before they can work with the security data. A data lake whose data is already transformed and context-enriched allows data scientists to quickly generate value for insight, modeling, and ultimately threat detection.

ML SDK from Exabeam

As a security data scientist, I find Exabeam’s analytics platform does all of the above well enough to tackle various use cases in the production system. We are opening the analytics platform up with a machine learning (ML) SDK and a set of APIs so that external data scientists can enjoy the same level of ease in interacting with the value-enriched data. Our goal for the ML SDK is to provide full access to Exabeam’s data lake and database. Levels of its usage include:

Mechanism to build statistical profiles and flag for alerts

Exabeam’s UEBA system already supports five hundred types of histogram-based models defined over known data feeds for statistical profiling. These models enable p-value based analysis for rare or new event detection. To accommodate local or specialized data feeds, a security analyst can already use configuration files to define new statistical profiles, with no code.
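To make the idea of a histogram-based profile with p-value analysis concrete, here is a minimal sketch. It is not Exabeam's model: the class name, the p-value definition (the share of past observations that fall in bins no more frequent than the observed one), and the 0.05 threshold are assumptions chosen for illustration.

```python
from collections import Counter


class HistogramProfile:
    """Categorical histogram of past values for one entity and one feature."""

    def __init__(self):
        self.counts = Counter()

    def update(self, value):
        """Record one more observation of `value`."""
        self.counts[value] += 1

    def p_value(self, value):
        """Share of history in bins that are no more frequent than `value`'s bin.

        A never-seen value has a count of zero, so its p-value is 0.0.
        """
        total = sum(self.counts.values())
        if total == 0:
            # No history yet, so nothing can be called rare (a design choice).
            return 1.0
        observed = self.counts.get(value, 0)
        tail = sum(c for c in self.counts.values() if c <= observed)
        return tail / total

    def is_anomalous(self, value, threshold=0.05):
        """Flag values whose p-value falls below the (assumed) threshold."""
        return self.p_value(value) < threshold
```

For example, a profile of the countries a user logs in from would give a new country a p-value of zero and a rarely seen country a small p-value, while the user's usual country would score close to one.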

Access to transformed and enriched events stored in HDFS-based event store

All data feeds coming into Exabeam’s system are readily parsed, normalized, transformed, and enriched. This bridges the security knowledge gap and eliminates the high learning threshold for those data scientists wanting to work in UEBA but without domain expertise. This is one of the most valuable aspects of the ML SDK.

Access to read and create contexts in the database

Contextual information, such as users’ department information, is used to add dimensions to alerts. For example, alerts can be calibrated on the peer context. Context data is stored in the database for reading. Some use cases may call for the creation of new contexts. New machine learning-derived contexts can be written to the database for uses such as alert conditions or calibration. For example, an alert should only be triggered in the context where the asset is learned to be a gateway. Learning from data to create new contexts is an important use case for machine learning in security analytics.
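As a rough illustration of learning a context from data, the sketch below labels an asset as a gateway when many distinct users are seen on it. The heuristic, the threshold, the field names (`asset`, `user`), and the function names are assumptions made for this example; they do not describe how Exabeam derives contexts or writes them to its database.

```python
from collections import defaultdict


def learn_gateway_context(events, min_distinct_users=3):
    """Return {asset: is_gateway} from events with 'asset' and 'user' fields.

    Assumed heuristic: an asset used by at least `min_distinct_users`
    different users behaves like a shared gateway.
    """
    users_by_asset = defaultdict(set)
    for event in events:
        users_by_asset[event["asset"]].add(event["user"])
    return {
        asset: len(users) >= min_distinct_users
        for asset, users in users_by_asset.items()
    }


def should_alert(event, gateway_context):
    """Use the learned context as an alert condition: only gateways qualify."""
    return gateway_context.get(event["asset"], False)
```

The learned dictionary plays the role of a new context: once stored, it can gate or calibrate alerts without being recomputed for every event.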

Access to the Apache-Spark computing platform

Exabeam’s machine learning use cases are built on Apache Spark. Some examples are described in this June blog and this August blog. External data scientists can enjoy the same level of implementation ease, afforded by access to a computing ecosystem based on HDFS, a MongoDB database, and the Apache Spark engine.

Ability to create alerts

There are two primary use cases for machine learning in UEBA. One is to derive new contexts, which I’ve already mentioned. The other is to create alerts for detection, whether it is a new way to detect malicious domains from DNS logs or to identify machines exhibiting unusual behavior in NetFlow logs. Via an API, an alert created by a data scientist with an algorithm running on Apache Spark is seamlessly integrated with other Exabeam-native alerts. Having all alerts within the same pane of glass for security analysts is an important user-interface requirement for operationalizing externally created alerts.

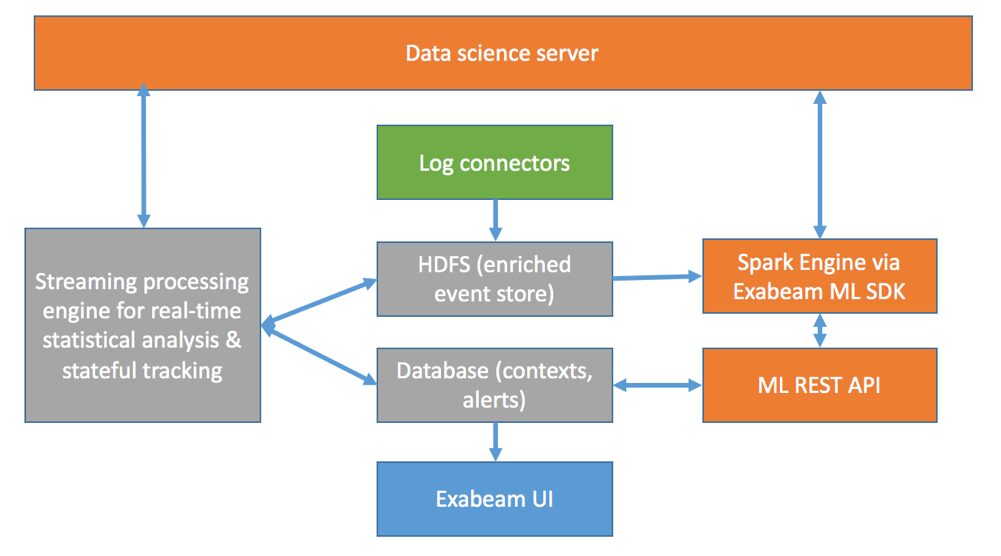

A Spark-enabled architecture

The figure above shows the components of Exabeam’s security analytics infrastructure. Data is ingested into HDFS from log connectors. Exabeam’s stream processing engine, together with a MongoDB database, serves real-time anomaly detection based on statistical analysis. Here, new or existing histogram-based models are fully configurable via configuration files. This is also where events are enriched in real time for the HDFS-based event store. Exabeam’s Spark SDK allows development of batch-oriented use cases such as context estimation and detection. The coordination between real-time and batch learning and scoring is controlled via a data science server. The output of the ML algorithms is written to the database, which stores the newly ML-derived contexts as well as the external ML-based alerts. These alerts are seamlessly integrated with the native alerts to ensure a uniform user experience.

In summary, Exabeam provides a security knowledge-infused data lake that substantially lowers the barriers to entry to security analytics. Exabeam’s ML SDK/API provides access to the Spark computing platform and to the enriched data in HDFS and the database, allowing data scientists to create additional value from their data.

- Tags

- Data Science

Derek Lin

Chief Data Scientist | Exabeam | Derek Lin is the Chief Data Scientist at Exabeam, building products to help security teams accelerate and improve threat detection, investigation and response (TDIR) by adding intelligence to their existing security tools. His current and prior machine-learning research interests include behavior-based security analytics, risk-based banking fraud detection, and speech and language recognition. He holds numerous patents and authors papers in areas of fraud detection and cybersecurity.
