One of the things I like best about Exabeam Data Lake is its query feature. In just a few seconds, you can enter a search term and pull up the logs you need to investigate security risks affecting your network.

As an avid Data Lake user, I’ve spent quite a bit of time using its query function. Thanks to some new built-in functionality from Exabeam and my own experimentation, I’ve found 10 query tips that can help you use Data Lake to its fullest.

-

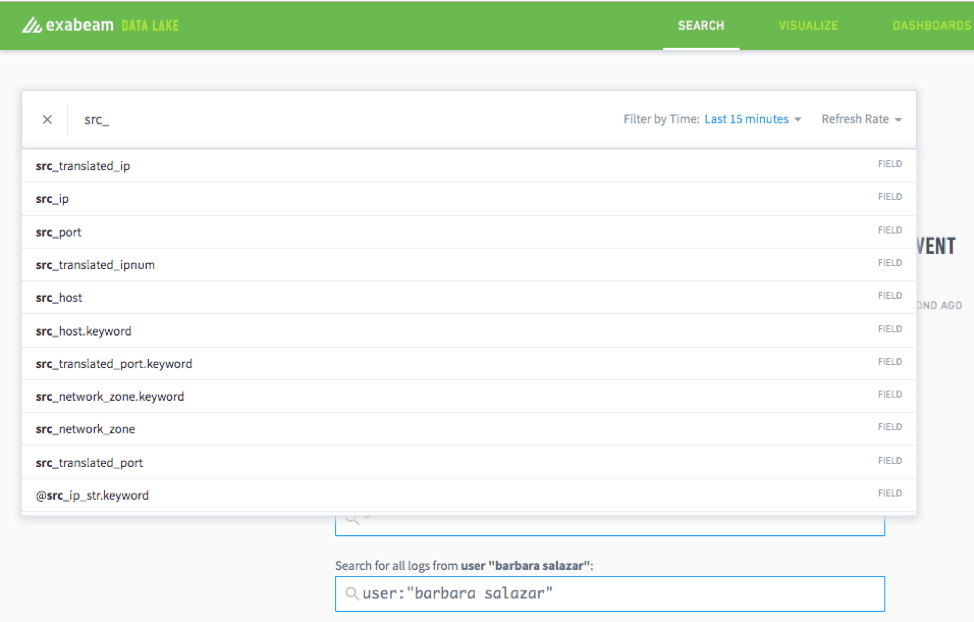

Auto-populate

Through a survey of its users, Exabeam determined that auto-population was a search feature many of us wanted. With this new addition, you can type one or a few letters of a search term, and related terms appear automatically.

Figure 1: Data Lake’s auto-populate search feature suggests related search terms.

-

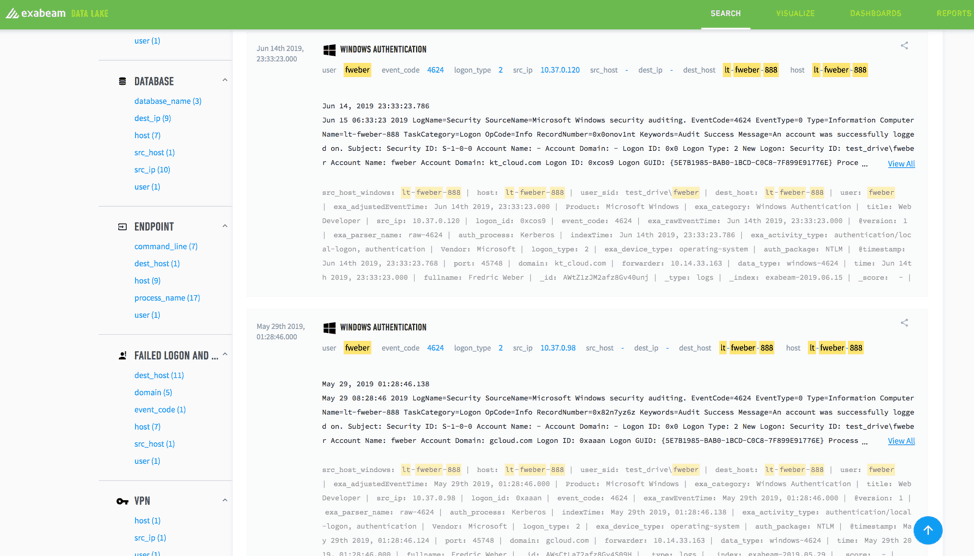

Review sample logs

Sometimes you add a new log source with no way of knowing how the data inside it is formatted. With Data Lake, as long as you have a general idea of what that log contains, you can query for terms you expect to find in it and quickly review sample logs, which makes it easier to parse the data.

Figure 2: After integrating a new log source, you can query search terms and quickly review sample logs, making it easier to parse the data inside.

-

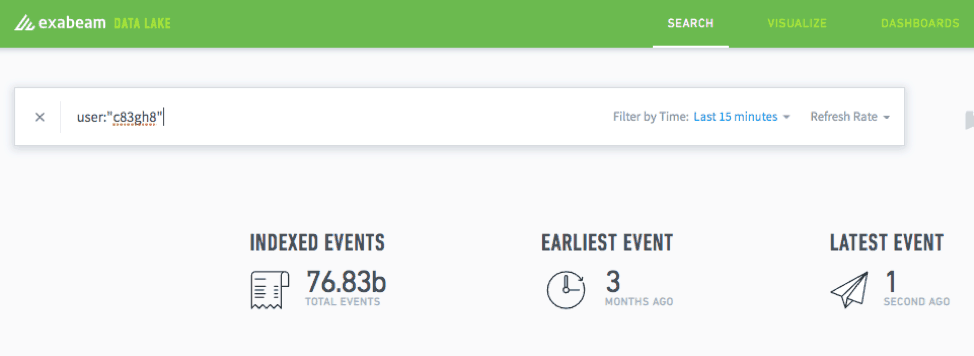

Use quotes for alphanumeric queries

When you’re querying an alphanumeric string, put quotes around the entire string. Otherwise, the system may break the string apart and search for its letters and numbers separately.

Figure 3: When querying an alphanumeric string, add quotes to the string to narrow the search.
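If you assemble query strings in a script, for example from a list of indicators, you can apply the quoting automatically. The following is a minimal sketch that assumes Lucene-style syntax, in which a double-quoted string is searched as one phrase and any backslash or double quote inside it is escaped with a backslash. The helper quote_term is hypothetical, not an Exabeam API, and the sample values are made up.

```python
def quote_term(term: str) -> str:
    """Wrap a search term in double quotes so it is searched as one phrase.

    Assumes Lucene-style syntax, where a literal backslash or double quote
    inside a quoted phrase must be escaped with a backslash.
    """
    # Escape backslashes first so the backslashes added for quotes aren't doubled.
    escaped = term.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


# Hypothetical alphanumeric values, such as an asset tag and a host name.
for value in ["WS4F92A7", "host-01b"]:
    print(quote_term(value))
```

Each value is printed wrapped in double quotes, for example "WS4F92A7", ready to paste into the query bar.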

-

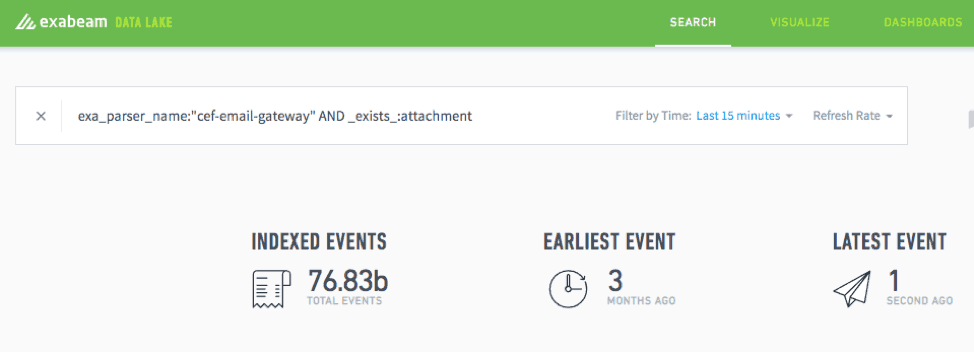

Use the “is not null” argument

The _exists_: function is the equivalent of an “is not null” condition: it returns only events in which a given field contains data. The argument is the field name itself. For example, if you were looking specifically for emails with attachments, you could apply _exists_: to the field that records attachments to separate those results out. To do this, type _exists_: followed by the field name:

_exists_:FIELD_NAME

Figure 4: Use the _exists_: function to run queries on a field when it contains data.
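To make the “is not null” behavior concrete, here is a local Python illustration of the same filter applied to a few parsed events. It is a sketch of the semantics only, not Data Lake’s implementation; the field name attachment and the sample events are hypothetical, and a real index may treat empty strings or empty lists differently.

```python
from typing import Any


def exists(events: list[dict[str, Any]], field: str) -> list[dict[str, Any]]:
    """Keep only events in which `field` is present and not None.

    A local illustration of "is not null" semantics, not Data Lake's
    implementation; how a real index treats empty strings or empty lists
    may differ.
    """
    return [e for e in events if e.get(field) is not None]


# Hypothetical parsed email events; "attachment" is an illustrative field name.
emails = [
    {"sender": "alice", "attachment": "report.pdf"},
    {"sender": "bob"},
    {"sender": "carol", "attachment": None},
]

print(exists(emails, "attachment"))
```

Only Alice’s email is returned: Bob’s event has no attachment field at all, and Carol’s has the field with no value.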

-

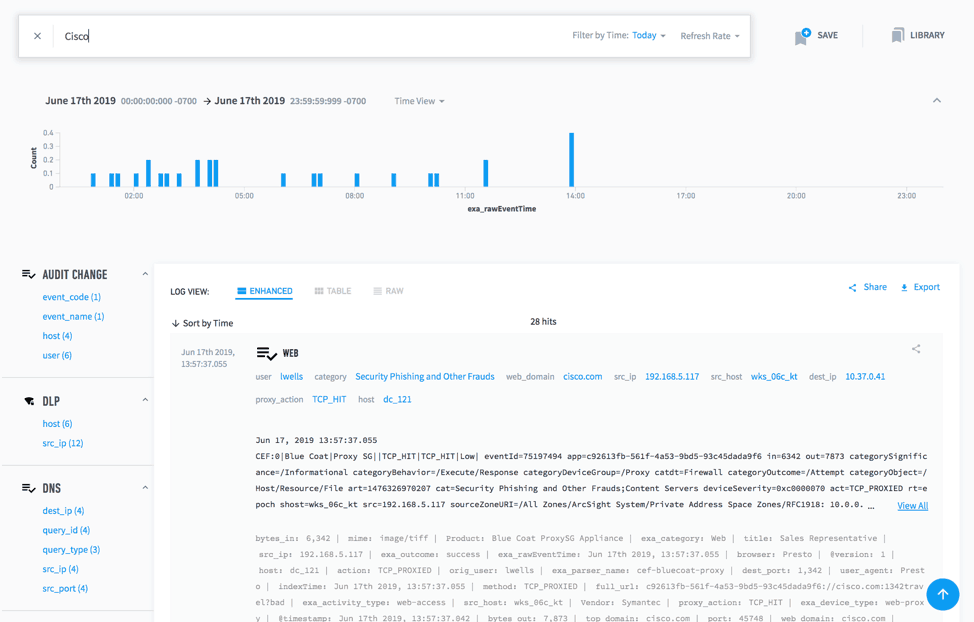

Query broadly, then narrow down

Start with a broad query. You’ll get logs back that you can then review to see whether any of the new fields should have a parser. If you aren’t sure what a field is called, you can use the auto-populate feature to track it down. For example, suppose you just added print logs. Searching for “print” in the raw logs for a few minutes will probably identify the logs you’re interested in. Similarly, if you searched for “Cisco”, you could quickly identify fields in that data that you could use either to tighten your query syntax or to analyze the Cisco or printer data.

Figure 5: Submit a broad query, then narrow the results to find any new fields that should have a parser.
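Once a broad query returns sample logs, one quick way to see which fields might deserve a parser is to tally the key=value pairs that appear in the matching lines. This sketch assumes the raw logs use key=value formatting (many sources do, but not all), and the sample lines and the function name candidate_fields are hypothetical.

```python
import re
from collections import Counter

# Matches the key in key=value pairs, where the key starts with a letter.
# Assumes key=value formatting; other log formats need a different pattern.
KV_PATTERN = re.compile(r"\b([A-Za-z][\w.]*)=")


def candidate_fields(raw_logs: list[str], term: str) -> Counter:
    """Count field names in logs whose raw text contains `term` (case-insensitive)."""
    counts: Counter = Counter()
    for line in raw_logs:
        if term.lower() in line.lower():
            # Count each field once per line so repeated keys don't inflate totals.
            counts.update(set(KV_PATTERN.findall(line)))
    return counts


# Hypothetical raw log lines from newly added sources.
sample = [
    "2023-05-01 printer=HP-3F user=jdoe pages=12 job=print",
    "2023-05-01 printer=HP-2B user=asmith pages=3 job=print",
    "2023-05-01 device=cisco-asa src=10.0.0.5 action=deny",
]

for field, n in candidate_fields(sample, "print").most_common():
    print(field, n)
```

Fields that show up in most matching lines are good candidates for a parser or for narrowing the query.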

-

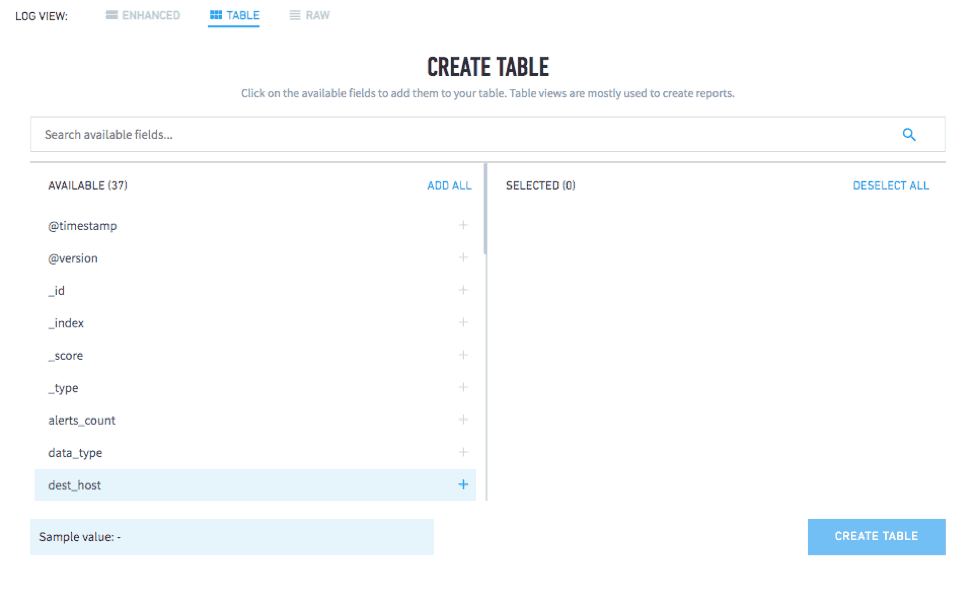

Add parsed fields to a data table

One of the best features of Data Lake is that you can now create a data table from your parsed fields. This makes the information easier to export and gives you a visual breakdown that nontechnical readers can easily understand.

Figure 6: Create a data table from your parsed fields to easily export the information and create a scannable visual breakdown.
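Conceptually, a data table built on a parsed field is a count of events per field value, which you can then export. Here is a minimal standard-library Python sketch of that idea. The event records and the field name app are hypothetical, and this is not how Data Lake builds its tables internally.

```python
import csv
import io
from collections import Counter


def data_table(events: list[dict], field: str) -> list[tuple[str, int]]:
    """Count events per value of `field`, most frequent first (events lacking it are skipped)."""
    counts = Counter(e[field] for e in events if field in e)
    return counts.most_common()


def to_csv(rows: list[tuple[str, int]], field: str) -> str:
    """Render the table as CSV text, ready to save or share."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow([field, "count"])
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical parsed events.
events = [
    {"user": "jdoe", "app": "Power BI"},
    {"user": "asmith", "app": "Excel"},
    {"user": "blee", "app": "Power BI"},
]

table = data_table(events, "app")
print(to_csv(table, "app"))
```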

-

Create visualizations

From data tables, you can then easily generate popular visualizations like pie charts and bar graphs. This comes in handy if someone in another department asks for a specific type of information, such as how many people are using Power BI within your network. In a matter of seconds, you can pull a chart and send it over.

Figure 7: Create visuals with data tables to share information across your organization.
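A question like how many people are using Power BI calls for a count of distinct users rather than a count of events, because one person can generate many events. This minimal sketch over hypothetical parsed events shows the difference. The field names user and app are assumptions.

```python
from collections import defaultdict


def distinct_users_per_app(events: list[dict]) -> dict[str, int]:
    """Count unique users per application (people, not events)."""
    users: defaultdict[str, set[str]] = defaultdict(set)
    for e in events:
        users[e["app"]].add(e["user"])
    return {app: len(names) for app, names in users.items()}


# Hypothetical parsed events.
events = [
    {"user": "jdoe", "app": "Power BI"},
    {"user": "jdoe", "app": "Power BI"},  # same person, second event
    {"user": "blee", "app": "Power BI"},
    {"user": "asmith", "app": "Excel"},
]

print(distinct_users_per_app(events))
```

Power BI has three events here but only two users, which is the number the other department is actually asking for.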

-

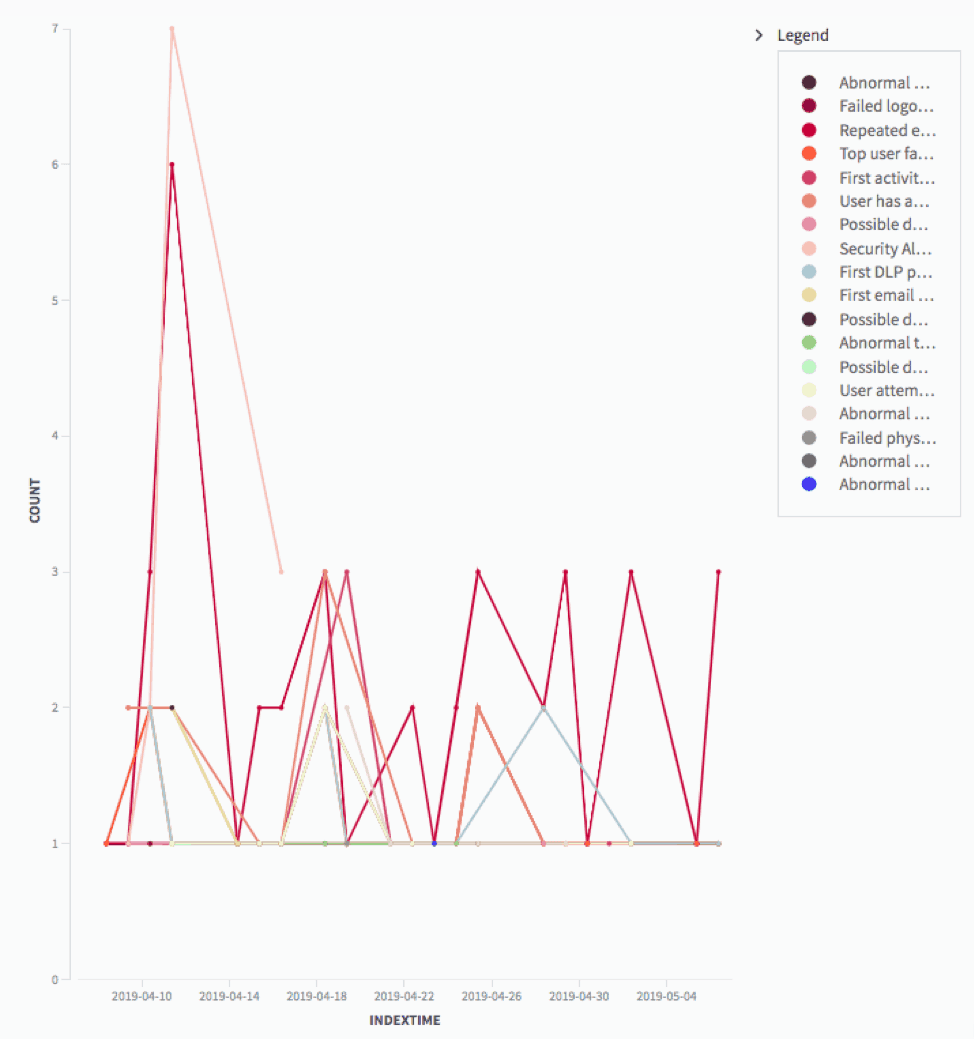

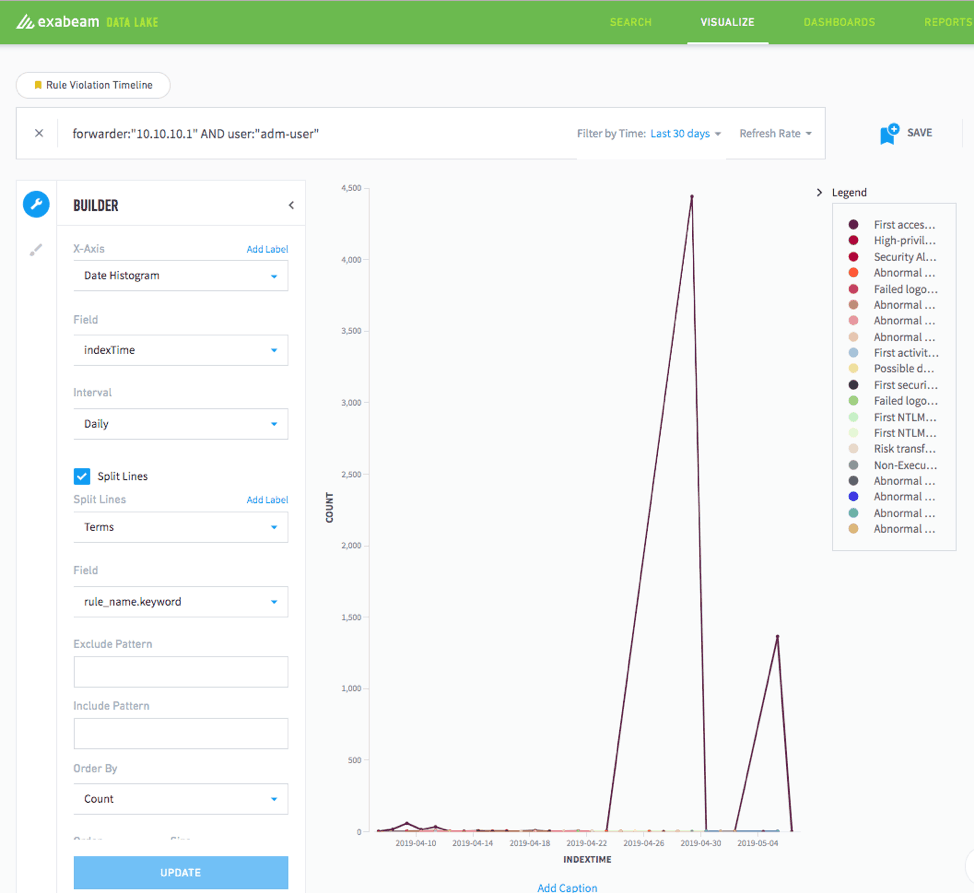

See rule violations

If you have Advanced Analytics configured to send rule triggers to Data Lake, you can quickly analyze rule-trigger data and visualize the results. This makes patterns easy to spot: outliers stand out, and so do users’ habitual behaviors. You can query by user, rule, asset, or any other field you choose, and create visualizations that show trends across all rule triggers.

Figure 8: Configure Advanced Analytics to send rule triggers to Data Lake to identify patterns and create visuals to illustrate emerging trends.
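Spotting outliers in rule triggers comes down to grouping by a field and comparing each group against the typical count. The following is a minimal sketch that assumes the triggers have been exported as records with hypothetical user and rule fields. The “more than twice the median” cutoff is an arbitrary illustrative choice, not Exabeam’s scoring, and the rule names are made up.

```python
from collections import Counter
from statistics import median


def trigger_outliers(triggers: list[dict], field: str, factor: float = 2.0) -> dict[str, int]:
    """Return values of `field` whose trigger count exceeds `factor` x the median count."""
    counts = Counter(t[field] for t in triggers)
    cutoff = factor * median(counts.values())
    return {value: n for value, n in counts.items() if n > cutoff}


# Hypothetical rule triggers forwarded from Advanced Analytics.
triggers = (
    [{"user": "jdoe", "rule": "first-time-vpn-country"}] * 9
    + [{"user": "asmith", "rule": "failed-logins"}] * 2
    + [{"user": "blee", "rule": "failed-logins"}] * 3
    + [{"user": "ckim", "rule": "new-asset-access"}] * 1
)

print(trigger_outliers(triggers, "user"))
```

Passing a different field name, such as "rule", groups the same triggers along that dimension instead.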

-

Search for noncompliant activities

If you can identify areas of noncompliance within your own organization, you can head off trouble early. When rule triggers are piped in from Advanced Analytics, Data Lake lets you skip over all the compliant events in sessions, which are far more numerous, and get right to the badness. It also lets you run metrics on different types of noncompliant activity over time. Separately, Threat Hunter in Advanced Analytics lets you query for combinations of events based on rule triggers.
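To track noncompliant activity over time, you bucket rule triggers by period and count them by type. Here is a minimal sketch that assumes each trigger record carries a hypothetical ISO 8601 time field and a rule name, and that buckets by calendar day. The rule names are made up.

```python
from collections import Counter
from datetime import datetime


def daily_trigger_counts(triggers: list[dict]) -> dict[tuple[str, str], int]:
    """Count triggers per (day, rule), sorted chronologically."""
    counts = Counter(
        (datetime.fromisoformat(t["time"]).date().isoformat(), t["rule"])
        for t in triggers
    )
    return dict(sorted(counts.items()))


# Hypothetical noncompliance rule triggers.
triggers = [
    {"time": "2023-05-01T09:15:00", "rule": "usb-write"},
    {"time": "2023-05-01T17:40:00", "rule": "usb-write"},
    {"time": "2023-05-02T08:05:00", "rule": "personal-email-upload"},
    {"time": "2023-05-02T13:30:00", "rule": "usb-write"},
]

for (day, rule), n in daily_trigger_counts(triggers).items():
    print(day, rule, n)
```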

-

Dormant user rule

One danger many organizations face comes from dormant user accounts. Data Lake lets you set a dormant user rule that alerts you when an account that hasn’t been used in a while suddenly becomes active. Because this kind of event can signal that your network has been compromised, the alert is crucial. The same search functionality can also help you spot other suspicious activity, such as a single user sending an unusually large number of attachments.
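The logic behind a dormant user alert can be sketched like this: remember when each account was last active, and flag new activity that follows a gap longer than a threshold. This minimal sketch assumes a hypothetical 90-day threshold and login events given as (user, timestamp) pairs. Data Lake’s actual rule configuration may differ.

```python
from datetime import datetime, timedelta

# Hypothetical dormancy threshold; tune it to your organization's policy.
DORMANCY = timedelta(days=90)


def dormant_reactivations(
    events: list[tuple[str, datetime]], dormancy: timedelta = DORMANCY
) -> list[tuple[str, datetime, datetime]]:
    """Return (user, last_seen, reactivated_at) for activity after a gap longer than `dormancy`."""
    alerts = []
    last_seen: dict[str, datetime] = {}
    # Process events in time order so each gap is measured from the previous event.
    for user, ts in sorted(events, key=lambda e: e[1]):
        prev = last_seen.get(user)
        if prev is not None and ts - prev > dormancy:
            alerts.append((user, prev, ts))
        last_seen[user] = ts
    return alerts


# Hypothetical login events.
events = [
    ("jdoe", datetime(2023, 1, 3)),
    ("asmith", datetime(2023, 1, 4)),
    ("asmith", datetime(2023, 2, 1)),
    ("jdoe", datetime(2023, 6, 20)),  # first activity in over five months
]

for user, prev, ts in dormant_reactivations(events):
    print(f"{user}: inactive since {prev:%Y-%m-%d}, active again {ts:%Y-%m-%d}")
```

Only jdoe is flagged. An account’s first event in the data is never flagged, because there is no earlier activity to measure against, so a real rule needs a lookback window longer than the threshold.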

When combined with Data Lake’s advanced reporting capabilities, these features can help you stay on top of your network’s security, catch potential breaches early, and maintain the integrity of your infrastructure. Best of all, when a member of your business’s leadership team asks for information on that activity, you’ll be able to show that you have full oversight of the day-to-day activity on your network.

Tim Lowe is a guest contributor.
